MCP Servers Are the New Shadow IT for AI

Table of Contents

- Key Takeaways

- The Protocol That’s Quietly Wiring AI Into Everything

- A Quick Primer: How MCP Servers Work in Practice

- What Early MCP Adoption Is Teaching Us About Security

- Risk Patterns That Keep Showing Up

- Inventory First: You Can’t Secure What You Can’t See

- How Qualys TotalAI Detects MCP Servers

- Beyond Inventory: Mapping What Each Server Actually Exposes

- Graph-Based Relationship Mapping

- Security Assessment: Testing What Attackers Can Actually Do

- Practical Steps After You Find MCP Servers

- The Bottom Line

- Frequently Asked Questions (FAQs)

Key Takeaways

MCP servers are becoming the default wiring between AI agents and enterprise applications — but most organizations have zero visibility into where they are, what they expose, or how they can be abused. Qualys TotalAI now provides layered discovery of MCP servers across network, host, and supply chain perspectives, with security assessment capabilities actively in development. This post covers the MCP landscape, why it matters for security, and what we’re building.

The Protocol That’s Quietly Wiring AI Into Everything

The AI agent landscape has shifted over the past year. AI systems are evolving from traditional chatbots into active participants deeply integrated into enterprise workflows, where they interact with tools, systems, and infrastructure to observe, decide, and act in real time.

The technology making this possible is the Model Context Protocol (MCP), an open standard introduced by Anthropic in late 2024 that defines how AI applications connect to external tools and data sources. Think of it as a universal adapter — a structured, JSON-RPC-based bridge that lets an AI agent ask an MCP server: “What tools are available?” and then invoke those tools on demand.

Adoption has been rapid, with over 10,000 active public servers within a year. Major organizations such as OpenAI, Google DeepMind, and Microsoft have incorporated the standard. For enterprises, this means MCP servers are likely already present in their environments. The task now is to understand where they are, what they connect to, and what risks their use carries.

A Quick Primer: How MCP Servers Work in Practice

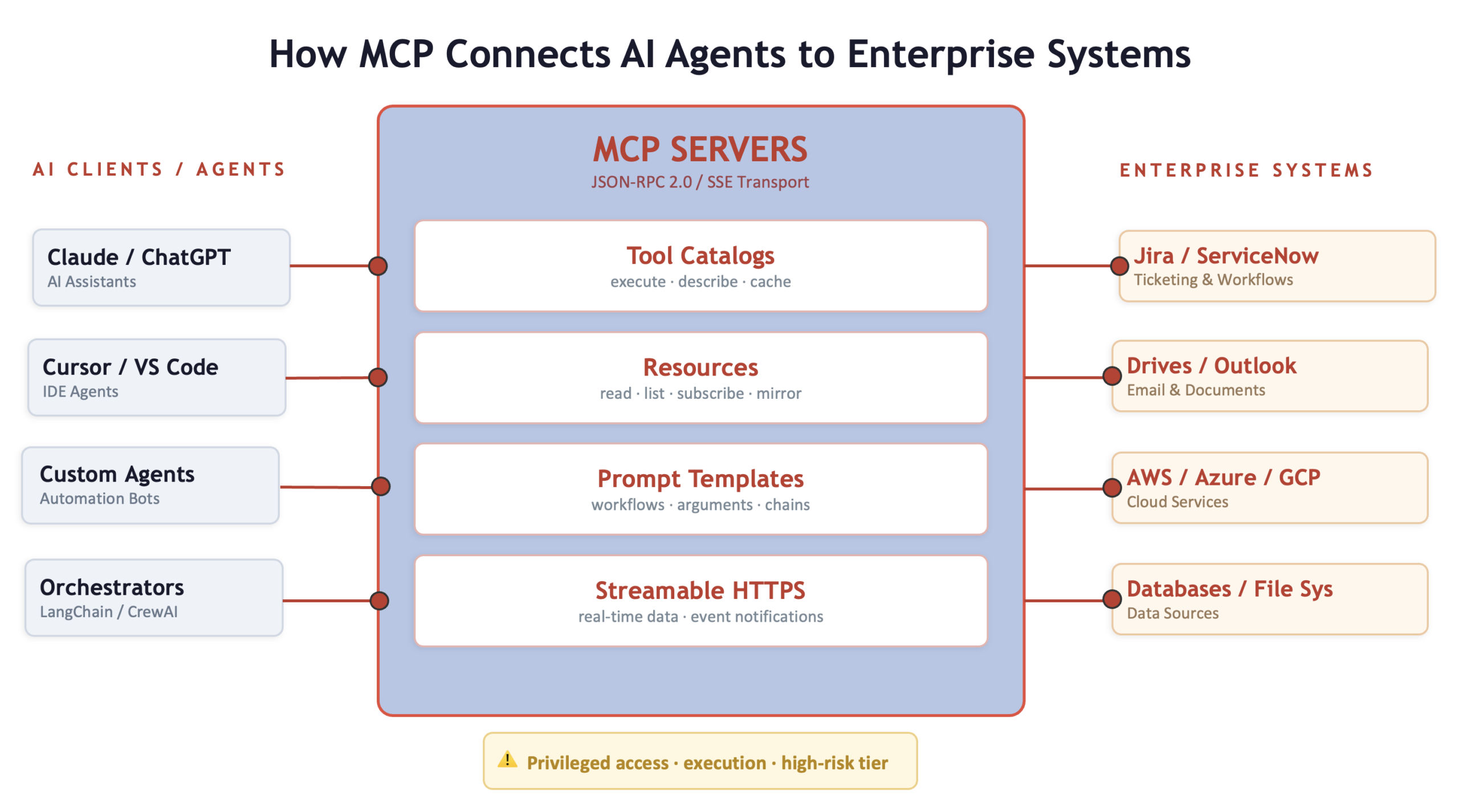

Before getting into the security side, it helps to understand the mechanics. An MCP server sits between an AI client, such as an automation agent or orchestration framework, and the enterprise systems it interacts with. The communication follows a predictable pattern:

1. Tool Discovery – occurs when an AI client or AI Agent connects to an MCP server and requests its supported capabilities. The server returns structured descriptions of available tools, resources, and prompts, enabling the client to reason about which actions are possible and how to invoke them.

2. Invocation – occurs when an AI client decides to act and invokes the appropriate tool through the MCP server. The server carries out the request against the underlying system and returns the result to the client.

3. Streaming – allows an AI client to receive ongoing updates from an MCP server as state changes occur. Through streaming responses, the server can continuously deliver new information, enabling the client to stay in sync with real‑time data and events.

MCP servers can expose a range of capabilities, including catalogs of tools, access to resources such as files or metrics, and predefined prompts for common workflows. Many also support streaming responses, allowing AI clients to receive live or incremental updates as underlying state changes.
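The discovery and invocation steps above can be sketched as the JSON-RPC 2.0 messages a client would send. This is a minimal illustration, not a full client: the method names `tools/list` and `tools/call` come from the MCP specification, while the `create_ticket` tool, its arguments, and the `rpc_request` helper are hypothetical names chosen for the example. Transport, the initial handshake, and streaming are left out.

```python
import json
from itertools import count

# Monotonic request ids; JSON-RPC uses the id to pair responses with requests.
_ids = count(1)


def rpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request as a plain dict (hypothetical helper)."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Step 1, tool discovery: ask the server which tools it offers.
discover = rpc_request("tools/list")

# Step 2, invocation: call one tool by name with structured arguments.
# "create_ticket" and its arguments are made up for illustration.
invoke = rpc_request(
    "tools/call",
    {
        "name": "create_ticket",
        "arguments": {"title": "Disk full on db-01", "priority": "high"},
    },
)

print(json.dumps(discover))
print(json.dumps(invoke, indent=2))
```

The point of the sketch is how little separates "asking what is possible" from "doing it": both are small structured messages, and the second one carries whatever arguments the agent decided on.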

This architecture is powerful. It is a new integration tier sitting between your AI stack and your internal systems. Real‑world actions such as creating records, triggering workflows, or modifying configurations are performed by invoking tools exposed by an MCP server. MCP defines how those capabilities are described and called, while the underlying systems determine the actual behavior.

What Early MCP Adoption Is Teaching Us About Security

As the MCP ecosystem has grown, a clearer picture is emerging of how these servers are being built and deployed in practice.

The most important lesson is that MCP changes the security model for GenAI and agentic applications.

Unlike traditional services, MCP servers sit at the intersection of natural‑language reasoning and privileged execution. Capabilities are described in human‑readable terms, discovered dynamically by AI clients, and invoked autonomously based on context rather than fixed call paths. This means familiar risks can manifest in new ways. For example, an overly broad tool description or ambiguous parameter schema can cause an agent to select or chain tools in unintended ways, even when the underlying APIs behave as designed.

Similarly, MCP servers often expose powerful operations—such as file access, configuration changes, or workflow triggers—behind a single integration point. A weakness like over‑scoped credentials or insufficient input validation may look routine in isolation, but when combined with agent‑driven invocation, it can enable actions to be taken across systems without explicit human intent at each step.

MCP also expands trust boundaries in subtle but important ways. Discovery metadata, tool descriptions, and even error messages become part of the model’s decision‑making context, not just static configuration. At the same time, MCP servers frequently act as shared bridges between agents and multiple downstream systems, amplifying the impact of insecure defaults or reused reference patterns.

The result is a new execution model where existing issues are easier to trigger, harder to reason about, and more likely to propagate as examples are copied across the ecosystem. Securing MCP therefore requires treating these servers as AI‑driven control planes, not just another API layer.
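To make the earlier point about broad tool descriptions and ambiguous parameter schemas concrete, here is a small lint sketch over MCP tool definitions. It assumes the `name`, `description`, and `inputSchema` fields that the MCP specification defines for tools; the specific checks, the five-word threshold, and both example tools are illustrative assumptions rather than an established rule set.

```python
def lint_tool(tool):
    """Return a list of findings for one tool definition (heuristic sketch)."""
    findings = []
    schema = tool.get("inputSchema", {})

    # A very short description gives the model little to constrain tool choice.
    if len(tool.get("description", "").split()) < 5:
        findings.append("description too short to constrain tool selection")

    # JSON Schema accepts undeclared properties unless this is explicitly False.
    if schema.get("additionalProperties", True) is not False:
        findings.append("schema accepts undeclared parameters")

    for name, spec in schema.get("properties", {}).items():
        if "type" not in spec:
            findings.append(f"parameter '{name}' has no declared type")
        elif spec["type"] == "string" and not any(
            key in spec for key in ("enum", "pattern", "maxLength")
        ):
            findings.append(f"parameter '{name}' is an unconstrained string")
    return findings


# Hypothetical loose tool: vague purpose, free-form inputs.
loose = {
    "name": "run",
    "description": "Runs things",
    "inputSchema": {
        "type": "object",
        "properties": {"target": {}, "command": {"type": "string"}},
    },
}

# Hypothetical tighter tool: narrow purpose, enumerated input, closed schema.
strict = {
    "name": "restart_service",
    "description": "Restart one named service on the staging cluster only",
    "inputSchema": {
        "type": "object",
        "properties": {"service": {"type": "string", "enum": ["api", "worker"]}},
        "required": ["service"],
        "additionalProperties": False,
    },
}

for tool in (loose, strict):
    print(tool["name"], lint_tool(tool))
```

A check like this does not prove a tool is safe. It only surfaces definitions where the agent, rather than the schema, ends up deciding what a call means.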

A Maturity Gap

MCP servers are increasingly acting as privileged execution environments, bridging AI agents to file systems, APIs, developer tools, and cloud infrastructure. Many early MCP servers are thin wrappers around existing APIs or web services that were originally designed to be invoked programmatically and deterministically. Exposing these interfaces through MCP shifts the decision of what to call and when from fixed application logic to LLM‑driven reasoning, introducing new security challenges around intent, scope, and control that traditional API designs were never built to accommodate. Treating them as production services, subject to the same identity, input validation, and least privilege expectations as any other application, is quickly becoming table stakes.

As the ecosystem matures, these early lessons are likely to shape better defaults, stronger reference implementations, and clearer guidance for developers. The opportunity now is to internalize these lessons before MCP becomes invisible infrastructure assumed to be safe by default.

Risk Patterns That Keep Showing Up

- Capability discovery as reconnaissance – Even a read-only MCP endpoint can leak internal system names, tool schemas, resource paths, and namespaces. For an attacker, this provides reconnaissance that lowers the cost of targeted intrusion.

- Tool invocation as an execution surface – MCP tools can open tickets, trigger deployments, run database queries, and change configurations. If tool invocation can be influenced, either directly or through prompt injection, it effectively grants control over the actions the MCP server can perform.

- Supply chain exposure – MCP implementations are built on rapidly evolving SDKs and open-source projects. Protocol updates are frequent and internal forks accumulate custom patches. This pattern reflects the same supply chain dynamics the industry has dealt with for years, now applied to an AI integration layer.

Inventory First: You Can’t Secure What You Can’t See

This is where most security conversations about MCP need to start. Before hardening, testing, or governing MCP servers, organizations need to identify them. And that is harder than it sounds.

MCP services dodge traditional visibility for several reasons. They sometimes bind to localhost instead of network interfaces, can be configured to listen on random high ports, and can be hidden behind reverse proxies or API gateways. Some MCP services are built into IDE plugins and developer tools. Moreover, certain services initially deployed for quick testing were never decommissioned, while others became production dependencies without proper approval. The result is a familiar pattern: a growing set of unmanaged integration points, each with privileged access to internal systems, collectively representing an attack surface that existing security tools were never designed to detect.

An accurate MCP inventory answers fundamental governance questions: Where are MCP servers running? Who owns them? What tools and resources do they expose? Who can reach them? Without this baseline, every downstream security activity, such as hardening, monitoring, and incident response, remains reactive rather than deliberate.

How Qualys TotalAI Detects MCP Servers

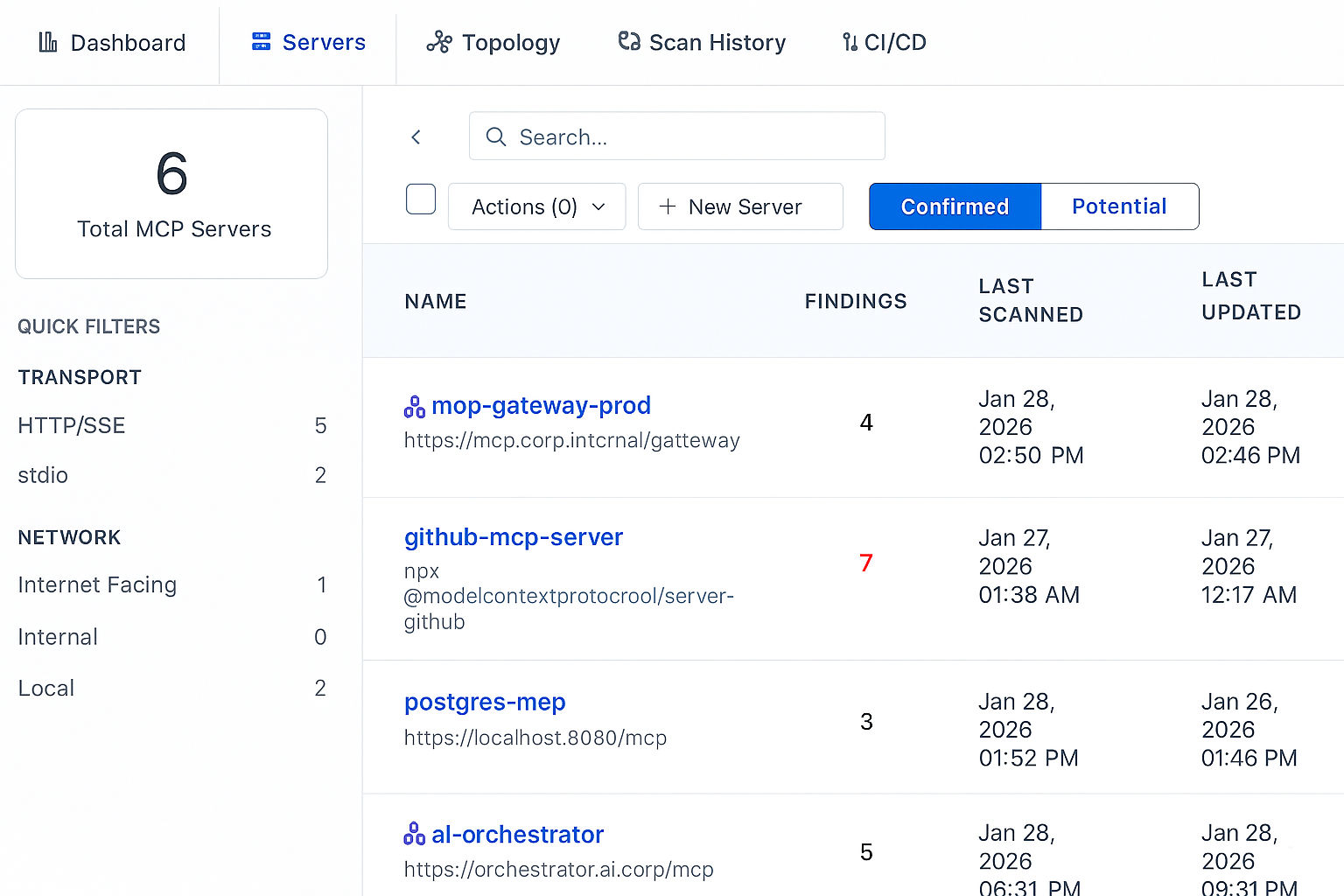

We designed our detection approach around a simple principle: no single detection layer is sufficient. MCP detection is treated as a layered exercise rather than a single technical check.

At the network level, externally reachable MCP services can often be inferred by probing common MCP endpoints and looking for protocol‑specific behavior, such as characteristic headers or structured capability responses. On their own, these signals are not sufficient, but they provide useful starting points.

At the host level, inspection identifies MCP servers that are only accessible locally or run behind internal gateways. Runtime indicators confirm whether MCP is in use, even when nothing is exposed on the network.

At the supply chain level, dependency analysis surfaces MCP adoption earlier in the lifecycle. The presence of MCP SDKs in application code often signals future deployment and helps explain how MCP capabilities spread across teams and services.

Individually, these signals are incomplete, but when combined, they provide a more accurate picture of where MCP is present, how it is used, and where closer scrutiny is required.
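Two of the signals described above lend themselves to a short sketch: recognizing an MCP handshake response on the network, and spotting MCP SDKs in dependency manifests. This is not how Qualys TotalAI implements detection; it is a simplified illustration under stated assumptions. The response check relies on the `protocolVersion`, `capabilities`, and `serverInfo` fields that the MCP specification defines for an `initialize` result. The package list is a small, incomplete sample, and the function names are hypothetical.

```python
import json
import re

# Illustrative, incomplete sample of MCP SDK package names per ecosystem.
KNOWN_MCP_PACKAGES = {
    "npm": {"@modelcontextprotocol/sdk"},
    "pypi": {"mcp", "fastmcp"},
}


def looks_like_mcp_initialize(body):
    """Network signal: does a response body resemble an MCP initialize result?"""
    try:
        msg = json.loads(body)
    except ValueError:
        return False
    if not isinstance(msg, dict):
        return False
    result = msg.get("result")
    return (
        msg.get("jsonrpc") == "2.0"
        and isinstance(result, dict)
        and isinstance(result.get("protocolVersion"), str)
        and isinstance(result.get("serverInfo"), dict)
        and isinstance(result.get("capabilities"), dict)
    )


def mcp_deps_in_package_json(text):
    """Supply chain signal: MCP SDKs declared in a package.json document."""
    manifest = json.loads(text)
    declared = set()
    for section in ("dependencies", "devDependencies"):
        declared.update(manifest.get(section, {}))
    return sorted(declared & KNOWN_MCP_PACKAGES["npm"])


def mcp_deps_in_requirements(text):
    """Supply chain signal: MCP SDKs listed in a requirements.txt document."""
    found = set()
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if not line:
            continue
        # The package name ends at the first extras or version delimiter.
        name = re.split(r"[\[<>=!~; ]", line, maxsplit=1)[0].lower()
        if name in KNOWN_MCP_PACKAGES["pypi"]:
            found.add(name)
    return sorted(found)


sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "protocolVersion": "2024-11-05",
        "capabilities": {"tools": {}},
        "serverInfo": {"name": "example-server", "version": "0.1.0"},
    },
})
sample_package_json = '{"dependencies": {"@modelcontextprotocol/sdk": "^1.0.0", "express": "^4"}}'
sample_requirements = "requests==2.32\nmcp[cli]>=1.2  # agent bridge\n# fastmcp commented out\n"

print(looks_like_mcp_initialize(sample_response))
print(mcp_deps_in_package_json(sample_package_json))
print(mcp_deps_in_requirements(sample_requirements))
```

Neither check is conclusive alone, which mirrors the layered argument: a matching response shape says something is answering like an MCP server, while a manifest hit says a team has started building one.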

Beyond Inventory: Mapping What Each Server Actually Exposes

Finding MCP servers is only half the problem. The other half, and arguably the more valuable part, is understanding what each server exposes. Once an MCP endpoint is confirmed, the next scan phase pulls a full capability map.

For each confirmed MCP server, we extract a structured inventory of its capabilities. This includes the full tool catalog with names, descriptions, and input schemas; exposed resources with their URIs and MIME types; prompt templates along with their arguments and intended workflows; resource templates that define dynamic URI patterns; and filesystem roots the server can reach. We also capture server metadata, including name, version, protocol version, declared capabilities, and embedded instructions.

This is not just an asset list. Each element carries context. A tool’s input schema shows what parameters it accepts and what impact a crafted input could have. A resource URI indicates which internal systems the server connects to. A prompt template reveals pre-built workflows and underlying trust assumptions.
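As a rough illustration of how capability data carries context, the sketch below flattens `tools/list` and `resources/list` results into inventory records. The result shapes (`tools` with `inputSchema`, `resources` with `uri` and `mimeType`) follow the MCP specification; the record layout, the `build_inventory` name, and the sample server are assumptions for the example and do not describe the TotalAI data model.

```python
from urllib.parse import urlparse


def build_inventory(server_name, tools_result, resources_result):
    """Flatten capability listings into one list of records (hypothetical layout)."""
    records = []
    for tool in tools_result.get("tools", []):
        schema = tool.get("inputSchema", {})
        records.append({
            "server": server_name,
            "kind": "tool",
            "name": tool["name"],
            # Parameter names hint at what a crafted input could influence.
            "params": sorted(schema.get("properties", {})),
            "required": sorted(schema.get("required", [])),
        })
    for resource in resources_result.get("resources", []):
        parsed = urlparse(resource["uri"])
        records.append({
            "server": server_name,
            "kind": "resource",
            "name": resource.get("name", resource["uri"]),
            # Scheme and host (or path) hint at the backing internal system.
            "scheme": parsed.scheme,
            "backend": parsed.netloc or parsed.path,
        })
    return records


# Made-up listings for a hypothetical server.
tools_result = {"tools": [{
    "name": "run_query",
    "description": "Run a read-only SQL query against the orders database",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}, "limit": {"type": "integer"}},
        "required": ["sql"],
    },
}]}
resources_result = {"resources": [
    {"uri": "postgres://orders-db.internal/public/orders", "name": "orders table"},
    {"uri": "file:///var/log/app.log", "name": "app log", "mimeType": "text/plain"},
]}

inventory = build_inventory("mcp-devtools", tools_result, resources_result)
for record in inventory:
    print(record)
```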

Graph-Based Relationship Mapping

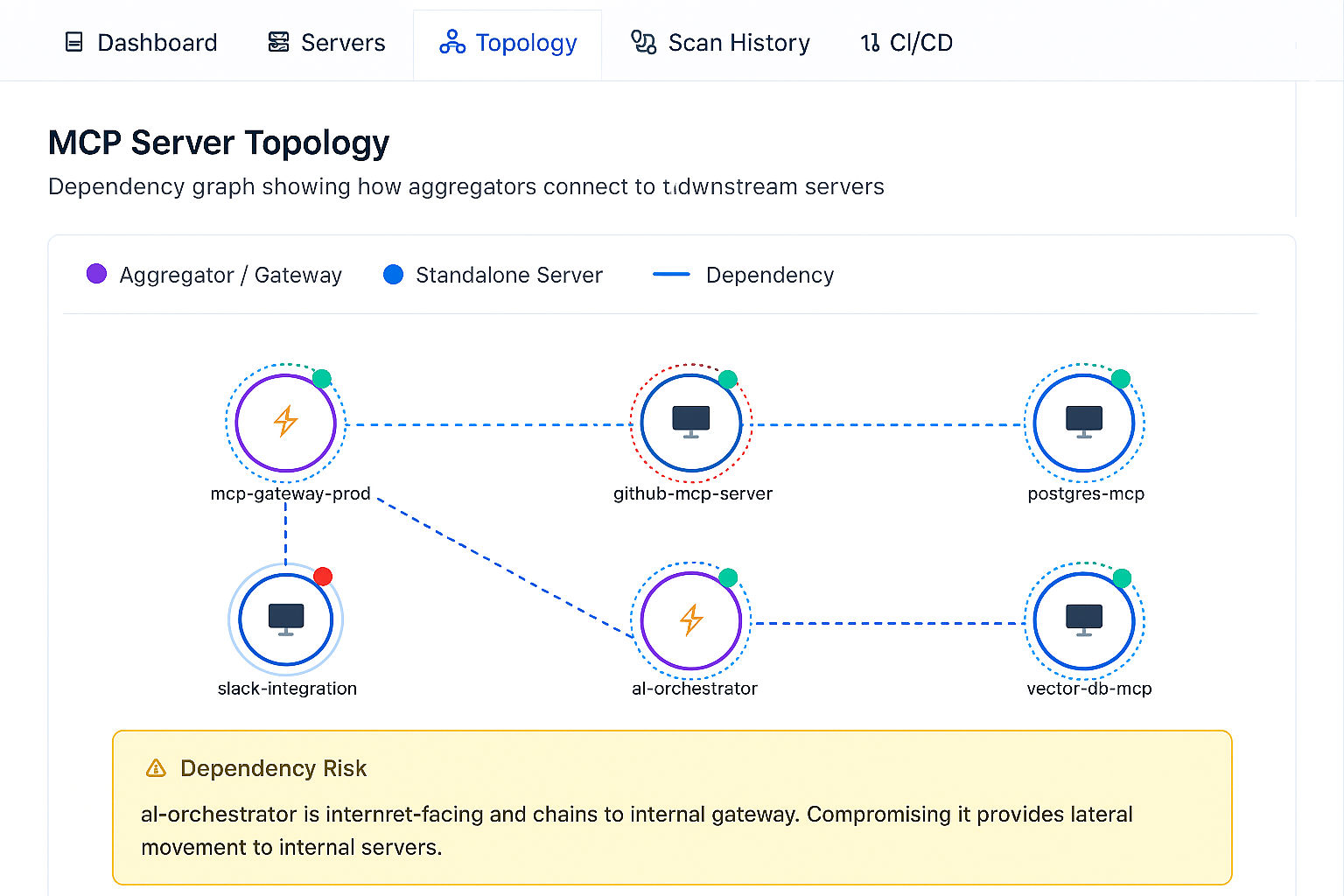

Raw lists are useful but limited. A clearer picture emerges by mapping relationships between MCP servers, their capabilities, the internal systems those capabilities interact with, and the AI clients consuming them. In Qualys TotalAI, this is modeled as a graph, where each MCP server is a node connected to its exposed tools, resources, and downstream systems.

This graph reveals what flat inventories cannot: which servers have overlapping access to the same internal systems; which tools create transitive trust paths between otherwise isolated environments, where a single compromised server enables lateral movement; and which prompt templates chain multiple tools in ways that amplify blast radius.
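A toy version of this relationship mapping can be written with plain dictionaries. The sketch below is only meant to show the two questions a graph answers that a flat list does not; the edge list, server names, and function names are invented, and a real model would hold far more node and edge types.

```python
from collections import defaultdict

# Each edge: (MCP server, tool it exposes, downstream system the tool touches).
# All names are made up for illustration.
edges = [
    ("mcp-devtools", "run_query", "orders-db"),
    ("mcp-devtools", "deploy", "ci-pipeline"),
    ("mcp-support", "lookup_order", "orders-db"),
    ("mcp-support", "open_ticket", "ticketing"),
]


def overlapping_access(edges):
    """Systems that more than one server can reach."""
    servers_by_system = defaultdict(set)
    for server, _tool, system in edges:
        servers_by_system[system].add(server)
    return {
        system: sorted(servers)
        for system, servers in servers_by_system.items()
        if len(servers) > 1
    }


def bridged_systems(edges):
    """Servers that connect two or more systems, and so link them if compromised."""
    systems_by_server = defaultdict(set)
    for server, _tool, system in edges:
        systems_by_server[server].add(system)
    return {
        server: sorted(systems)
        for server, systems in systems_by_server.items()
        if len(systems) > 1
    }


print(overlapping_access(edges))
print(bridged_systems(edges))
```

In this made-up data, `orders-db` is reachable from both servers, and each server links the database to a second system, which is the kind of path a per-server inventory would not show.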

Security Assessment: Testing What Attackers Can Actually Do

Discovery and inventory are the foundation. The next question is what an attacker can do with the MCP servers you’ve identified. Our security assessment capabilities, which are currently under active development, are designed to address this need. MCP security checks span a broad range of threat categories identified through both internal research and findings from the wider security community, including risks such as tool poisoning and credential exposure.

Beyond these core areas, coverage continues to expand to include deeper validation of resource integrity, enforcement of authentication, identification of data exfiltration paths, assessment of path traversal risks, and detection of embedded secrets. This approach is intentionally iterative, evolving as new attack patterns emerge alongside the growing adoption of MCP.

Practical Steps After You Find MCP Servers

Detection is the beginning, not the end. When an MCP server appears in your inventory, a structured response process should follow. Based on our work with early adopters, this is the operational playbook:

- Clarify ownership and intent

Is this a developer experiment that became persistent, or a sanctioned production integration? Knowing who built it, who maintains it, and what tools it exposes is foundational.

- Map the exposure

Is the server reachable beyond localhost? Is it internet-facing? Does the network segmentation around it match the access level it holds? Many MCP servers start as internal tools and gradually become accessible from unexpected locations.

- Lock down authentication

Enforce authentication on every endpoint, separate discovery privileges from invocation privileges, and use least-privilege tokens for downstream integrations. The Astrix research showing 53% of servers rely on static secrets underscores the extent of the exposure.

- Add observability

Log capability discovery and invocation events. Monitor for enumeration spikes. Alert on unusual SSE connection patterns. MCP activity should be visible in your security operations workflow, not hidden in application logs.

- Harden the surface

Strip verbose identity banners, rate-limit discovery endpoints, and enforce strict JSON-RPC schema validation. Every detail an MCP server exposes reduces the effort required for an attacker.
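Two of the steps above, strict JSON-RPC validation and separating discovery privileges from invocation privileges, can be sketched as a small gate in front of an MCP server. This is one possible shape under stated assumptions, not a reference implementation: the scope names `mcp:discover` and `mcp:invoke`, the method-to-scope table, and the function names are all hypothetical.

```python
ALLOWED_KEYS = {"jsonrpc", "id", "method", "params"}

# Hypothetical scopes: listing capabilities and calling tools need different ones.
REQUIRED_SCOPE = {
    "tools/list": "mcp:discover",
    "resources/list": "mcp:discover",
    "tools/call": "mcp:invoke",
}


def validate_request(msg):
    """Return a list of problems; an empty list means the request is well-formed."""
    if not isinstance(msg, dict):
        return ["request must be a JSON object"]
    problems = []
    extra = set(msg) - ALLOWED_KEYS
    if extra:
        problems.append(f"unexpected keys: {sorted(extra)}")
    if msg.get("jsonrpc") != "2.0":
        problems.append("jsonrpc must be '2.0'")
    if not isinstance(msg.get("method"), str):
        problems.append("method must be a string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if "id" in msg and (
        isinstance(msg["id"], bool) or not isinstance(msg["id"], (str, int))
    ):
        problems.append("id must be a string or integer")
    if "params" in msg and not isinstance(msg["params"], (dict, list)):
        problems.append("params must be an object or array")
    return problems


def authorize(msg, token_scopes):
    """Deny by default: unknown methods and missing scopes are both rejected."""
    needed = REQUIRED_SCOPE.get(msg.get("method"))
    return needed is not None and needed in token_scopes


listing = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
calling = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
           "params": {"name": "deploy", "arguments": {}}}
padded = {"jsonrpc": "2.0", "id": 3, "method": "tools/list", "debug": True}

read_only_token = {"mcp:discover"}
print(validate_request(listing), authorize(listing, read_only_token))
print(validate_request(calling), authorize(calling, read_only_token))
print(validate_request(padded))
```

With a discovery-only token, the listing request passes and the tool call is refused, so a credential that leaks from a monitoring or cataloging job cannot be used to act.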

The Bottom Line

MCP is quickly becoming the connective tissue between AI systems and enterprise systems. Within a year, it has moved from a niche experiment to Linux Foundation governance, with adoption across every major AI platform and toolchain. That is not a trend that will reverse.

For security teams, the right response is not to block MCP adoption. It is to apply the same discipline used for any privileged integration tier: inventory early, detect reliably, test aggressively, and govern deliberately.

Qualys TotalAI provides the foundation for that approach. MCP server discovery across network, host, and supply chain perspectives is available today. Capability mapping provides visibility into what each server exposes. Security assessment, covering tool poisoning, injection attacks, credential exposure, agent deception, and more, is actively coming online.

MCP has become the next phase of API sprawl. Disciplined inventory and protocol-aware security testing will define what responsible AI enablement looks like. We would rather our customers be ahead of that curve than behind it.

Ready to see it in action? Start a trial and discover how Qualys TotalAI helps you identify, map, and assess MCP servers across your environment.

Frequently Asked Questions (FAQs)

What are MCP servers, and why are they a security risk?

MCP servers act as an integration layer between AI clients and enterprise systems, enabling access to tools, resources, and data. They increasingly function as privileged execution environments, bridging AI agents to file systems, APIs, developer tools, and cloud infrastructure. The risk arises because they often operate with broad privileges, rely on weak credential models such as long-lived static secrets, and expose capabilities that can be misused through tool invocation, prompt injection, or insecure implementations.

Why are MCP servers called the new shadow IT for AI?

MCP servers frequently evade traditional visibility. They bind to localhost, run on random high ports, sit behind proxies, or exist within developer tools and plugins. Many are deployed as experiments and later become production dependencies without formal approval. This results in a growing set of unmanaged integration points with privileged access to internal systems, similar to shadow IT.

How are MCP servers different from traditional web application servers?

MCP servers sit at the intersection of natural‑language reasoning and privileged execution. Their capabilities are described in human‑readable terms, discovered dynamically by AI clients, and invoked autonomously based on context rather than fixed call paths. This flexibility introduces familiar risks that can manifest in new ways. For example, an overly broad tool description or an ambiguous parameter schema can lead an agent to select or chain tools in unintended ways, even if the underlying APIs function as designed.

How does Qualys TotalAI detect MCP servers?

Qualys TotalAI uses a layered detection approach. At the network level, it probes MCP endpoints and identifies protocol-specific behavior. At the host level, it inspects systems to detect locally accessible MCP servers and confirms runtime usage. At the supply chain level, it analyzes dependencies to identify MCP SDK usage in application code. These combined signals provide an accurate view of where MCP is present and how it is used.

How do you secure Model Context Protocol (MCP) servers?

Securing MCP servers requires a structured approach: identify and inventory servers, clarify ownership and intent, map exposure, enforce authentication on all endpoints, separate discovery and invocation privileges, and use least-privilege tokens. Add observability by logging discovery and invocation events and monitoring for anomalies. Harden the surface by limiting exposed information, enforcing strict validation, and controlling access.

What is the risk of credential exposure in MCP servers?

A significant number of MCP servers rely on long-lived static secrets such as API keys. These credentials are difficult to rotate, easy to over-scope, and often propagate across projects. This creates systemic risk, as exposed or misused credentials can provide access to downstream systems and services connected through MCP.