How I Knocked Down 30 Servers from One Laptop

Following the release of the slowhttptest tool with Slow Read DoS attack support, I helped several users test their setups. One of the emails I received asked me to look at a set of slowhttptest results. According to the report, the tool had brought a service to its knees within a few seconds. This was quite surprising, because the system in question was designed to handle requests from several thousand clients all across the world, whereas the tool was opening only 1,000 concurrent connections.

To put things in context, it’s worth mentioning that the organization has around 60,000 registered users, each with a client application installed on their end. That application communicates periodically with the central service, receiving instructions and reporting results in short bursts (no, it’s not a botnet, as you might have thought!). There are around 2,000 running clients at any given time, and several hundred of them are normally connected concurrently.

System Architecture

Initially, I was pretty skeptical about being able to DoS the central service, so I asked for details of the system architecture. The system design followed best practices – the components of the system were being monitored, and all patches were applied in a timely manner. Thus, it was reasonable to expect that the system should have been able to tolerate the load produced by the slowhttptest tool.

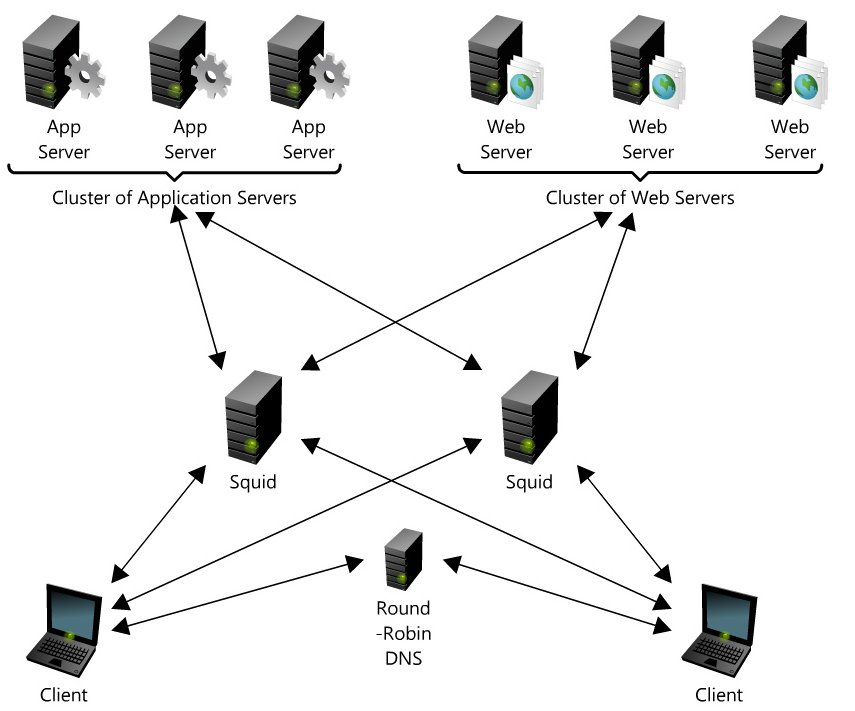

Here is the schematic view of the system below:

- A round-robin DNS acting as a load balancer that resolves the hostname to the IP address of one of the Squid proxies

- Two firewalled reverse proxy servers (Squid) running on powerful dedicated servers

- A cluster of web servers

- A cluster of application servers

I ran dig, a DNS lookup utility, to get the list of IP addresses the hostname can resolve to. After picking one, I added a line to my /etc/hosts mapping the hostname to that particular address, so that I could concentrate on attacking a single proxy rather than spreading the load among all of them. I then configured the slowhttptest tool aggressively to issue “Slow Read” requests for a photo of one of the company’s executives from the “Management Team” section of the website.
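The lookup-and-pin step can be sketched in Python. This is only an illustration, not what I actually ran: it goes through the system resolver via `socket.getaddrinfo` rather than querying DNS directly as dig does, and the helper names `resolve_ipv4` and `hosts_entry` are made up for this example.

```python
import socket


def resolve_ipv4(hostname):
    """Return the sorted, de-duplicated IPv4 addresses the system resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, 80, socket.AF_INET, socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, (ip, port)).
    return sorted({info[4][0] for info in infos})


def hosts_entry(ip, hostname):
    """Format a line for /etc/hosts that pins hostname to a single address."""
    return f"{ip}\t{hostname}"


if __name__ == "__main__":
    name = "localhost"  # placeholder; substitute the hostname under test
    try:
        for ip in resolve_ipv4(name):
            print(hosts_entry(ip, name))
    except OSError as err:
        print(f"could not resolve {name}: {err}")
```

Once a line like this is in /etc/hosts, the same lookup typically returns only the pinned address, which is exactly the effect wanted here: every connection from the test lands on one proxy.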

Results

Guess what? I got a true denial of service by rendering the front-end Squid machine inoperable. This effectively meant that I knocked out tens of backend HTTP servers from a single laptop. After further investigation, the cause turned out to be a simple misconfiguration. The operating system was configured to limit a process to 1024 open file descriptors, and since every open connection consumes at least one descriptor, Squid simply ran out of them.
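A quick way to spot this kind of limit is to compare the per-process descriptor limit with the number of connections you expect to hold open. The sketch below uses Python's Unix-only `resource` module to read the limit of the current process. The figures of two descriptors per proxied connection and 64 reserved descriptors are my assumptions for illustration, not measured Squid values, and `fits_in_limit` is a hypothetical helper.

```python
import resource  # Unix-only standard library module


def nofile_limits():
    """Return the (soft, hard) open-file limits of the current process."""
    return resource.getrlimit(resource.RLIMIT_NOFILE)


def fits_in_limit(soft_limit, concurrent_connections, fds_per_connection=2, reserved=64):
    """Check whether the expected connection count fits under the soft limit.

    fds_per_connection=2 assumes a proxy holding one client socket and one
    upstream socket per request; reserved=64 is a guessed allowance for logs,
    listeners, and cache files. Both are assumptions, not measured values.
    """
    if soft_limit == resource.RLIM_INFINITY:
        return True
    needed = concurrent_connections * fds_per_connection + reserved
    return needed <= soft_limit


if __name__ == "__main__":
    soft, hard = nofile_limits()
    print(f"soft limit: {soft}, hard limit: {hard}")
    for connections in (300, 1000):
        print(connections, "connections fit:", fits_in_limit(soft, connections))
```

Under these assumptions, a few hundred concurrent clients fit comfortably below a limit of 1024, while the 1,000 connections opened by the tool do not, even if each connection cost only a single descriptor.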

It was assumed that if a server can handle 60,000 connections per minute, it’s good to go. However, connection duration was overlooked. Under normal load the system accepted, served, and closed each connection so quickly that the pool of concurrent connections never filled up, so the system thresholds were never reached. A slow client that keeps its connections open changes that picture completely.
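The relationship between throughput and concurrency here is Little's law: the average number of open connections equals the arrival rate multiplied by how long each connection stays open. A small sketch makes the gap visible. The 50 ms and 300 s durations and the 200 connections per minute rate are hypothetical numbers chosen for illustration, not measurements from this system.

```python
def average_concurrency(connections_per_minute, seconds_per_connection):
    """Little's law: average open connections = arrival rate * time each stays open."""
    arrival_rate_per_second = connections_per_minute / 60.0
    return arrival_rate_per_second * seconds_per_connection


if __name__ == "__main__":
    # Normal traffic: very high throughput, but each connection is short-lived.
    fast = average_concurrency(60_000, 0.05)  # assumed 50 ms per connection
    # Slow Read traffic: tiny throughput, but each connection is held for minutes.
    slow = average_concurrency(200, 300)      # assumed 300 s per connection
    print(f"fast clients: about {fast:.0f} connections open at once")
    print(f"slow clients: about {slow:.0f} connections open at once")
```

With these numbers, 60,000 fast connections per minute keep only about 50 connections open at a time, while a trickle of 200 slow connections per minute builds up to about 1,000. That is why a throughput benchmark alone says nothing about whether a concurrency limit is safe.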

Moral

The moral of the story, aside from “Good beats Evil,” is that the security level of a system is defined by the weakest link in the chain. This is another example of security being more than just installing patches. In this particular case, the mistake could have been prevented with proper penetration and stress testing. You should not rely on “secure” architecture, and you should test for potential problems like this slow read DoS.

One more anecdote: OpenTTD (Open Transport Tycoon Deluxe), an open source simulation game, was found to be vulnerable to a slow read DoS attack that prevented users from joining the server. The OpenTTD developer community reacted very quickly, releasing patches that fixed the problem and even reporting the vulnerability to http://cve.mitre.org/. It’d be nice to see other developers taking DoS problems that seriously.

Update, January 28 2012:

Released slowhttptest v1.4, featuring virtually unlimited connections.
