The New Era of Application Security: Reasoning-Based Agents, Runtime Reality, and Risk Intelligence

Table of Contents

- Introduction

- The Rise of Reasoning-Based Vulnerability Detection

- Application Risk Is Bigger Than Source Code

- Why Source Code Analysis Alone Cannot Secure Applications

- The Attack Surface Problem

- Runtime Reality vs. Source Code Theory

- Exploitability, Context, and Prioritization

- The Hidden Risks of Reasoning-Based Security Agents

- How Qualys TotalAppSec Leverages AI Differently

- The Future of AI in Application Security

- Frequently Asked Questions (FAQs)

Key Takeaways

- AI reasoning systems improve vulnerability detection in source code, but do not address the full spectrum of application security risk.

- Modern application security must account for APIs, runtime environments, and externally exposed assets beyond the source repository.

- Continuous discovery of internet-facing applications and APIs is essential to reduce exposure of the unseen attack surface.

- Runtime testing is required to validate exploitability, detect misconfigurations, and confirm whether security controls work in production.

- Effective application security programs integrate AI with attack surface discovery, runtime validation, and risk-based prioritization to manage real application risk.

Introduction

Application security is entering a new phase. It is now an AI problem, an API problem, and a runtime risk problem.

Artificial intelligence in application security is reshaping the category from both directions. It is improving how teams detect vulnerabilities through code reasoning, automated analysis, and faster testing. At the same time, it is expanding the application attack surface through AI-enabled features, model-connected APIs, new data flows, and runtime application security risks that many security programs were not built to govern. The teams that succeed in this new era will not treat AI as a shortcut. They will use it as a force multiplier inside a disciplined application security program.

This shift is already visible. Anthropic’s Claude Code Security is designed to reason through codebases and surface complex vulnerabilities with suggested fixes. OpenAI’s Aardvark, which has since evolved into Codex Security, operates as an agentic security researcher that analyzes repositories, builds project context, prioritizes findings, and validates issues before proposing fixes.

For application security leaders, the tension is real. Do you slow AI adoption because it introduces new risk, or lean in because the scale and speed of modern software delivery demand more automation? The real choice is neither outright resistance nor blind acceleration, but the disciplined integration of AI capabilities into established application security practices.

That distinction matters because application security is not the same as code security. Reasoning-based systems represent meaningful progress in source code analysis. But real application risk extends beyond the repository into exposed APIs, forgotten internet-facing assets, runtime misconfigurations, third-party scripts, malware, and the behavior of live systems in production.

The future of application security will be defined by how well organizations apply AI across the full spectrum of application risk without losing visibility, evidence, or control.

The Rise of Reasoning-Based Vulnerability Detection

Over the past year, the application security market has begun moving beyond traditional code scanning toward reasoning-based vulnerability detection.

These emerging systems do more than match known weakness patterns or flag rule violations. They attempt to analyze how software behaves, how trust boundaries are crossed, how data moves through an application, and where real exploit paths may exist. In effect, they operate less like static checkers and more like human security researchers.

Anthropic’s Claude Code Security illustrates this shift. Announced in February 2026, it scans codebases for vulnerabilities, surfaces issues that traditional methods may miss, and proposes targeted patches for human review. Anthropic also positions it as particularly effective for complex flaw classes such as business logic errors and access control weaknesses.

OpenAI has taken a similar step. What began as Aardvark in October 2025 has evolved into Codex Security, which entered research preview in March 2026. OpenAI describes Codex Security as an application security agent that builds project context, creates an editable threat model, identifies complex vulnerabilities, and proposes fixes aligned with system behavior. Where supported, it can also validate findings in sandboxed environments to distinguish signal from noise.

This marks a real shift in vulnerability detection.

“Traditional static analysis relies on predefined rules, signatures, and known code patterns. That model still has value, but it often struggles with flaws shaped by execution context, business logic, chained conditions, or subtle authorization failures.”

Reasoning-based systems attempt to move past these limits by modeling software behavior more contextually. They trace control flow, follow data propagation, examine component interactions, and infer how an attacker might abuse the system. This raises the ceiling on what automated code analysis can detect, especially in large and fast-moving codebases.

But it is still only one layer of application security.

Even the most advanced reasoning systems remain focused on code and project context. Application risk extends further into exposed APIs, shadow services, runtime misconfigurations, third-party scripts, client-side malware, and the business context that determines whether a weakness is actually material.

Application Risk Is Bigger Than Source Code

Source code security matters. Flaws in business logic, input validation, session management, and authentication flows can lead directly to compromise. However, application risk rarely remains confined to what exists inside the source repository.

“A mature application security program must answer a broader question:

What is actually running in production, what is exposed, and how does it behave under real-world conditions?”

That is the gap between code security and application security.

Why Source Code Analysis Alone Cannot Secure Applications

Source code analysis identifies vulnerabilities within application logic, but it cannot observe how applications behave once deployed.

Runtime infrastructure, API exposure, cloud configuration, and authentication workflows frequently introduce vulnerabilities that do not appear in the repository itself. Effective application security programs, therefore, combine code analysis with runtime testing, attack surface discovery, and exploit validation.

The Attack Surface Problem

Many application security failures do not begin with a missed bug in code review. They occur because organizations lose visibility into what is exposed.

Legacy applications remain internet-facing long after they are deprecated. Test APIs are never decommissioned. Forgotten subdomains remain reachable. Administrative interfaces become publicly accessible. Cloud services, ephemeral workloads, and automated deployment pipelines expand faster than traditional governance mechanisms can track.

An organization can analyze every repository in its Git environment and still miss a significant portion of real exposure if it does not continuously discover what is running across its environment.

This is where reasoning-based code analysis reaches its limit. An AI system can reason about code only if the application is known and brought into scope. If security teams do not know an asset exists, it will not be scanned, tested, or secured.

Attack surface discovery is not optional. It is foundational.
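
The core comparison behind continuous discovery can be sketched in a few lines: take what external discovery finds, subtract what the security team already tracks, and treat the remainder as unmanaged exposure. The following is a minimal illustration only. The function name, hostnames, and input lists are hypothetical, and a real discovery pipeline would draw on DNS, certificate, and cloud inventory sources rather than hard-coded lists.

```python
def find_unmanaged_assets(discovered_hosts, inventoried_hosts):
    """Return discovered hosts that are missing from the known inventory."""
    def normalize(host):
        # Hostnames are case-insensitive and may carry a trailing root dot.
        return host.strip().lower().rstrip(".")

    known = {normalize(h) for h in inventoried_hosts}
    found = {normalize(h) for h in discovered_hosts}
    return sorted(found - known)


# Hypothetical data: what discovery observed vs. what is in scope for testing.
discovered = ["app.example.com", "API-test.example.com", "legacy.example.com."]
inventory = ["app.example.com"]

unmanaged = find_unmanaged_assets(discovered, inventory)
print(unmanaged)  # ['api-test.example.com', 'legacy.example.com']
```

Anything in the resulting list is an asset that no repository scan would have reached, because it was never brought into scope.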

Runtime Reality vs. Source Code Theory

Runtime conditions frequently introduce security failures that remain invisible during code analysis. Several categories illustrate this distinction.

- Deployment misconfigurations are a common example. Insecure CORS policies, exposed debug modes, weak TLS configuration, reverse proxy errors, cache poisoning conditions, and missing security headers often appear only in deployment environments.

- Abuse and resilience testing is another example. Security teams need to know whether rate limits hold under pressure, whether authentication controls can be bypassed, whether weak credentials are accepted, and whether business workflows can be abused. These questions require interaction with live systems.

- Authorization and ownership failures illustrate the problem clearly in API environments. Static analysis may confirm that an authorization check exists. It often cannot prove whether ownership enforcement works correctly in real transactions. Broken Object Level Authorization, the top issue in the OWASP API Security Top 10, typically requires active testing against a running application to confirm exploitability.
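
The authorization case can be made concrete with a small simulation. The sketch below is an assumption-laden illustration, not a real scanner: the in-memory `ORDERS` store, both handler functions, and the `probe_bola` helper are hypothetical stand-ins for a live API and a runtime test. Both handlers contain an authentication check that a code review could confirm, but only an active probe shows which one actually enforces ownership.

```python
# Hypothetical in-memory data standing in for a running API's backing store.
ORDERS = {
    101: {"owner": "alice", "total": 40},
    102: {"owner": "bob", "total": 75},
}


def get_order_vulnerable(user, order_id):
    # An authentication check exists, but ownership is never verified.
    if user is None:
        return 401, None
    order = ORDERS.get(order_id)
    return (200, order) if order else (404, None)


def get_order_fixed(user, order_id):
    if user is None:
        return 401, None
    order = ORDERS.get(order_id)
    # Missing and foreign objects get the same response to avoid leaking IDs.
    if order is None or order["owner"] != user:
        return 404, None
    return 200, order


def probe_bola(handler, attacker, victim_order_id):
    """Return True if the attacker can read an object owned by someone else."""
    status, body = handler(attacker, victim_order_id)
    return status == 200 and body is not None and body["owner"] != attacker


print(probe_bola(get_order_vulnerable, "alice", 102))  # True
print(probe_bola(get_order_fixed, "alice", 102))       # False
```

The probe needs two identities and a known object belonging to the second one, which is why this class of flaw is usually confirmed against a running application rather than inferred from source.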

Exploitability, Context, and Prioritization

Security teams do not remediate every discovered vulnerability equally. Effective programs prioritize remediation based on what is exploitable, exposed, and materially important.

A meaningful risk decision requires multiple dimensions of context:

- Runtime exploitability: Is the issue reachable in a live system?

- External attack surface: Is the asset internet-facing?

- Regulated data exposure: Does it involve sensitive data?

- Business impact severity: Is the affected system business critical?

- Threat intelligence correlation: Does the weakness align with threat activity observed in the wild?

Even if source code reasoning can identify potential flaws, it does not, by itself, produce this multidimensional risk picture.
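
One way to picture how these dimensions combine is a simple additive score. The sketch below is illustrative only: the field names, weights, and sample findings are assumptions chosen for the example, and it is not the scoring model of any particular product.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    exploitable_at_runtime: bool
    internet_facing: bool
    touches_regulated_data: bool
    business_critical: bool
    active_threat_activity: bool


# Hypothetical weights that sum to 100; a real program would tune these.
WEIGHTS = {
    "exploitable_at_runtime": 35,
    "internet_facing": 25,
    "touches_regulated_data": 15,
    "business_critical": 15,
    "active_threat_activity": 10,
}


def priority_score(finding):
    """Sum the weights of every context dimension that applies."""
    return sum(w for field, w in WEIGHTS.items() if getattr(finding, field))


findings = [
    Finding("SQL injection in internal batch tool", True, False, False, False, False),
    Finding("BOLA on public orders API", True, True, True, True, False),
    Finding("Missing header on marketing site", False, True, False, False, False),
]

ranked = sorted(findings, key=priority_score, reverse=True)
for f in ranked:
    print(priority_score(f), f.name)
```

With these assumed weights, the publicly exposed, runtime-confirmed authorization flaw ranks first even though a code-only view might rate the SQL injection as the more severe weakness.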

The Hidden Risks of Reasoning-Based Security Agents

Reasoning-based security agents are a real step forward, but they also raise new operational considerations that security leaders must address.

Unlike traditional scanners, reasoning systems rely on model inference. Outputs can be non-deterministic. The same codebase may produce different findings as models evolve or as the surrounding context changes. Model updates, code comments, documentation, or prompt context may influence the reasoning path and affect outputs. Training data sources may also be opaque, raising questions about coverage, bias, and explainability. Even infrequent model hallucinations can reduce developer confidence if results cannot be validated with evidence.

For application security teams, that creates practical concerns.

- Is detection explainable, reproducible, auditable, and trusted by developers?

- Is customer code used to retrain models?

- How are customer code and scan data stored, processed, and protected during model analysis?

- Does the system reliably support every framework in the environment?

- How are false positives handled and measured?

- How are findings validated for exploitability in a live environment?

- What is the time-to-remediation workflow?
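
The reproducibility question in particular can be measured rather than debated. A minimal sketch, assuming each finding can be reduced to a file and weakness identifier: run the same analysis twice and compute the overlap between the two result sets. The dictionary shape, the `finding_key` choice, and the sample runs are hypothetical.

```python
def finding_key(finding):
    # Assumed identity of a finding: where it is and which weakness class.
    return (finding["file"], finding["cwe"])


def reproducibility(run_a, run_b):
    """Jaccard similarity between the finding sets of two runs (0.0 to 1.0)."""
    a = {finding_key(f) for f in run_a}
    b = {finding_key(f) for f in run_b}
    if not a and not b:
        return 1.0  # two empty runs agree completely
    return len(a & b) / len(a | b)


# Hypothetical results from two runs against the same commit.
run_1 = [
    {"file": "auth.py", "cwe": "CWE-287"},
    {"file": "orders.py", "cwe": "CWE-639"},
    {"file": "util.py", "cwe": "CWE-79"},
]
run_2 = [
    {"file": "auth.py", "cwe": "CWE-287"},
    {"file": "orders.py", "cwe": "CWE-639"},
    {"file": "export.py", "cwe": "CWE-22"},
]

print(reproducibility(run_1, run_2))  # 0.5
```

Tracking a number like this across model updates gives teams evidence for the audit and trust questions above instead of anecdotes.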

Reasoning improves source code analysis but addresses only one layer of application security. That is why modern application security cannot stop at code repository reasoning. It has to extend into runtime testing, attack surface discovery, exploit validation, and business-context prioritization across the full application estate.

Once AI enters the security workflow, it becomes part of the attack surface. Data poisoning, data exposure, prompt manipulation, workflow abuse, intent drift, over-reliance on automated results, and trust exploitation must now be incorporated into modern application security threat models.

How Qualys TotalAppSec Leverages AI Differently

Qualys TotalAppSec takes a complementary approach to AI. Rather than positioning AI as a black-box replacement for established application security testing, it applies AI where it can measurably improve signal, speed, and coverage across the broader application risk lifecycle, not just inside source code analysis.

An AI-powered application risk management platform for modern web applications and APIs, TotalAppSec combines continuous discovery, automated risk assessment, and remediation across on-premises and multi-cloud environments, API gateways, containers, and microservices. The solution includes API security with OWASP API Top 10 coverage, endpoint discovery, and runtime testing. It focuses on dynamic assessment of running applications through automated crawling and testing to detect runtime vulnerabilities, misconfigurations, and compliance drift, while continuously identifying known, unknown, forgotten, rogue, and shadow web apps and APIs.

AI-Powered Web Application Scanning

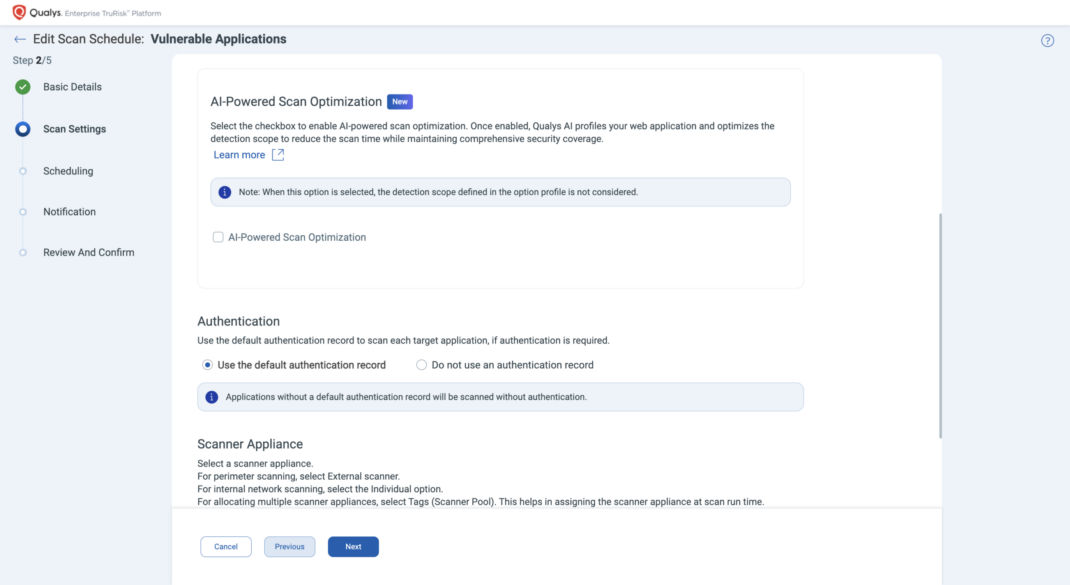

One clear example is AI-Powered Scan Optimization. It uses AI-assisted clustering of QIDs to streamline detection scope, reduce unnecessary checks, and focus scans on the most relevant high-risk areas in the target environment.

The result is faster scan completion, less noise, and more efficient vulnerability assessment across large web app and API estates, while preserving targeted coverage.

The AI-Powered Scan Optimization option is available in both the launch vulnerability scan and create vulnerability scan schedule workflows, and is enabled through a checkbox on the Vulnerability Scan > Scan Settings page.

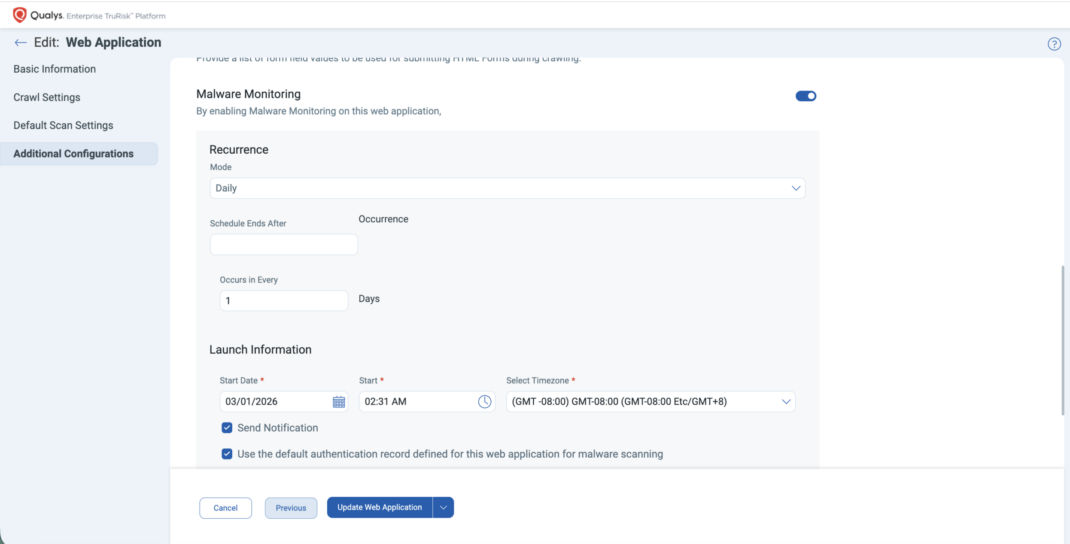

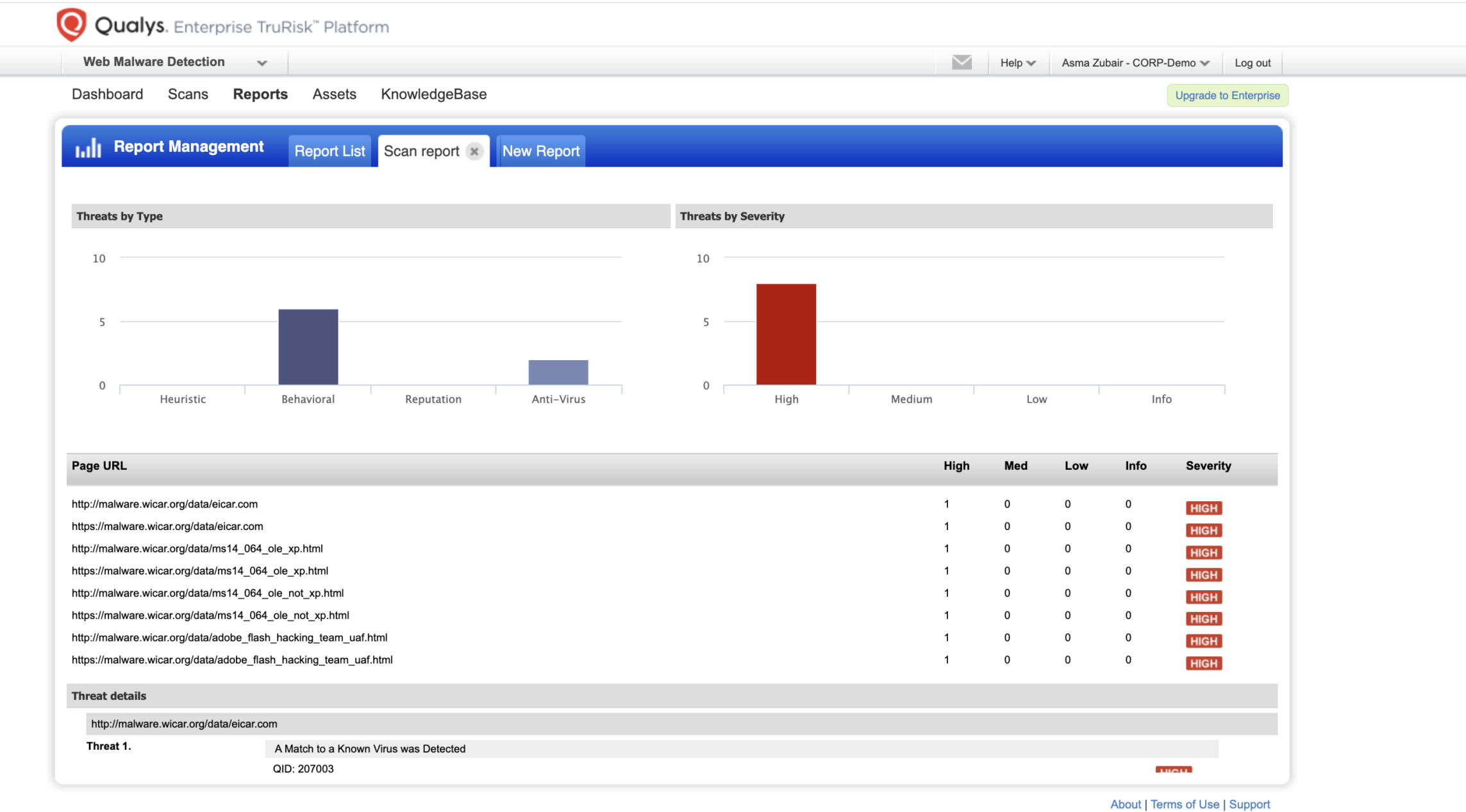

Deep Learning-Powered Web Malware Detection

TotalAppSec also uses AI in an area that static analysis alone cannot adequately cover: client-side malware detection.

Qualys’ deep learning-powered Web Malware Detection and Monitoring uncovers malicious JavaScript behavior that traditional server-side analysis and signature-based detection might miss and that could expose sensitive data.

By leveraging deep learning, web malware scans achieve approximately 99% accuracy in detecting zero-day threats and identifying client-side risks. These threats include:

- Obfuscated JavaScript injections

- Magecart-style skimmers

- Suspicious third-party script activity

- Data exfiltration patterns

Importantly, customer data is not used to train AI models. Detection models are trained on curated external malware feeds, preserving data privacy while strengthening detection capability.

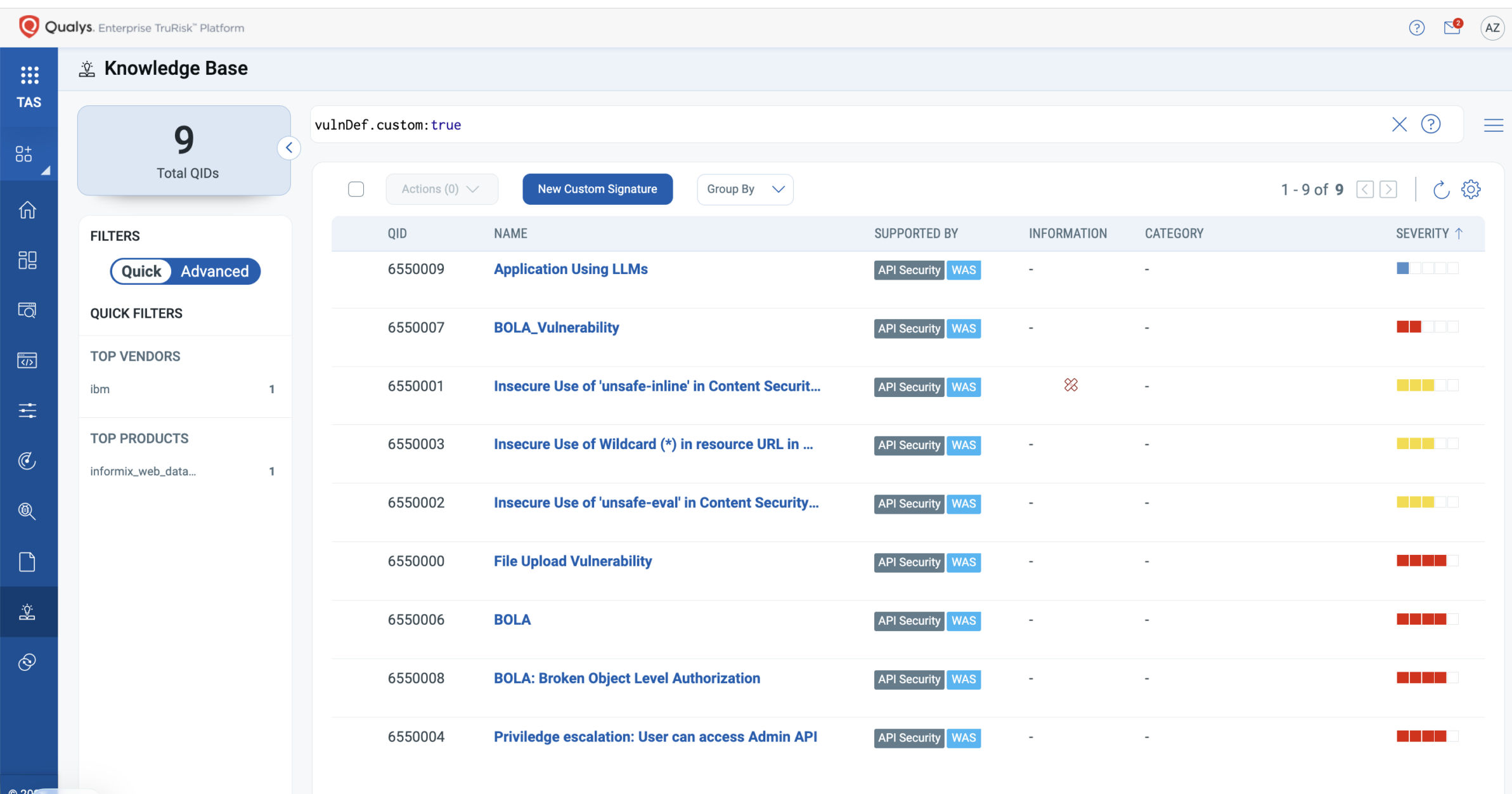

Discovering AI Usage in Web Apps and APIs

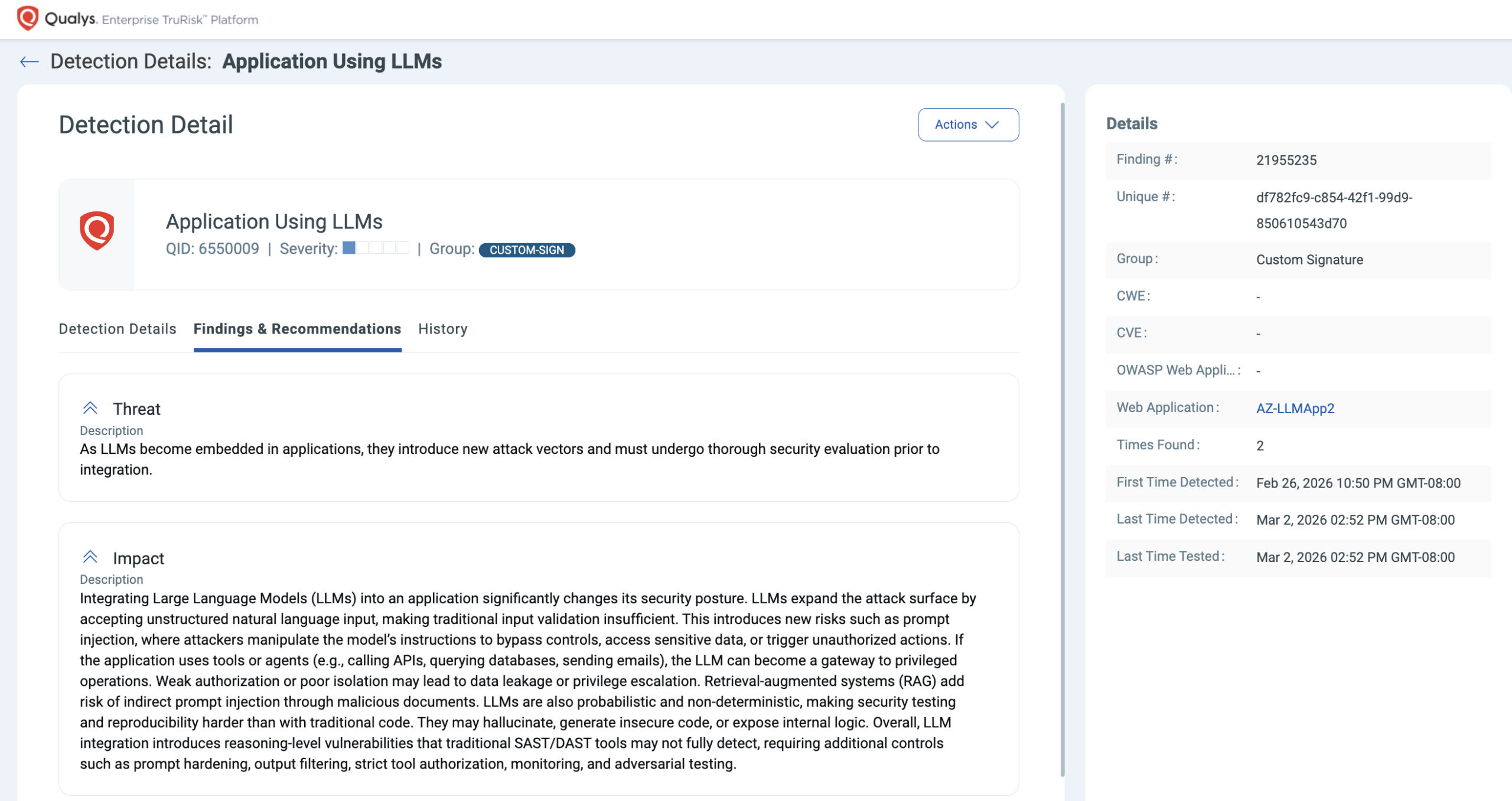

AI is also becoming part of the application attack surface itself. As organizations embed LLM-backed features into chat interfaces, workflows, APIs, and automation layers, and as APIs proxy prompts to third-party LLM providers, new risks emerge, including prompt injection, data leakage, model abuse, token exposure, and unauthorized inference usage. Security teams need visibility into where AI is actually present.

Qualys TotalAppSec addresses this through custom signatures to detect AI usage across applications and APIs. Teams can define targeted detection rules beyond the built-in vulnerability knowledge base, test custom signatures, detect AI usage, and trigger AI risk assessments for AI components. This way, organizations can look for AI-related response patterns, API endpoints, JavaScript artifacts, or request structures indicative of LLM integration and discover AI components that generic scanning may overlook. The value is practical: better visibility into where AI is embedded, better correlation with exposure context, more accurate risk assessments, and better governance as AI adoption expands across development teams. Custom signatures provide a scalable, systematic way to uncover and secure AI integrations.
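
To make the idea of pattern-based AI discovery concrete, the sketch below matches a few indicators of LLM integration against captured page or traffic content. It is a generic illustration and does not represent the Qualys custom signature format: the signature names, regular expressions, and sample script are all assumptions made for the example.

```python
import re

# Hypothetical indicators of LLM integration in scripts, requests, or responses.
AI_SIGNATURES = {
    "llm_provider_host": re.compile(r"api\.(openai|anthropic)\.com", re.I),
    "chat_completion_path": re.compile(r"/v\d+/chat/completions\b"),
    "chat_message_shape": re.compile(r'"messages"\s*:\s*\[\s*\{\s*"role"\s*:'),
}


def detect_ai_usage(text):
    """Return the names of all signatures that match the given content."""
    return sorted(
        name for name, pattern in AI_SIGNATURES.items() if pattern.search(text)
    )


# Hypothetical JavaScript artifact captured while crawling an application.
sample_js = (
    'fetch("https://api.openai.com/v1/chat/completions", '
    '{body: JSON.stringify({"messages": [{"role": "user"}]})})'
)

print(detect_ai_usage(sample_js))
print(detect_ai_usage("<html><body>Hello</body></html>"))  # []
```

Matches like these only indicate where AI is likely embedded; they are a trigger for a closer risk assessment of the component, not a finding in themselves.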

The Future of AI in Application Security

The future of application security is unlikely to be defined by a competition between static scanners and reasoning agents. Instead, the discipline is evolving toward risk intelligence that combines continuous discovery, dynamic validation, and contextual prioritization.

Reasoning-based systems represent real progress in code analysis. They improve the ability to interpret complex logic, reduce noise in vulnerability detection, and assist developers during remediation. However, application security cannot be solved solely at the code level. It is equally an exposure problem across interconnected services, a visibility problem across distributed assets, and a prioritization problem within environments where thousands of findings compete for attention.

“AI will shape the next generation of application security by accelerating analysis and decision support, but durable outcomes will depend on disciplined risk evaluation across the full application attack surface. The organizations that succeed will be those that apply AI with discipline while preserving evidence, transparency, and operator control.”

Platforms that combine discovery, contextual risk analysis, and risk-based prioritization are beginning to reflect this direction. Qualys TotalAppSec demonstrates that by applying AI to unify application discovery, risk assessment, TruRisk™-based prioritization, and remediation guidance across web applications and APIs. In that integration of intelligence, context, and operational control lies the trajectory of modern application security.

See how Qualys TotalAppSec helps you discover exposed applications and APIs, validate runtime risk, and prioritize what truly matters.

Frequently Asked Questions (FAQs)

What are AI reasoning systems in application security?

AI reasoning systems analyze source code behavior, data flows, and execution paths to identify vulnerabilities that traditional static analysis tools may miss.

Why is source code analysis insufficient for application security?

Source code analysis cannot detect vulnerabilities introduced by runtime configurations, exposed APIs, cloud infrastructure, or live system behavior.

What is the application attack surface in modern environments?

The application attack surface includes internet-facing web applications, APIs, cloud services, and supporting infrastructure that attackers can reach or interact with.

Why is runtime validation important in application security?

Runtime validation confirms whether vulnerabilities are exploitable in live systems and reveals misconfigurations, authorization flaws, and abuse scenarios that static analysis cannot detect.

How does AI change the risk landscape for application security?

AI expands the attack surface by introducing model integrations, prompt-driven APIs, and new data flows, creating additional security and governance risks.