Securing the Hybrid Cloud: A Guide to Using Security Controls, Tools and Automation

When a bank recently created a consumer mobile wallet, it built the entire project — from development to deployment — in the cloud, an increasingly common decision among enterprises.

A less common step taken by this multinational bank and Qualys customer was incorporating the security team from day one. It recognized that the safety of the application was as critical for its success as its feature functionality.

In doing so, this bank tackled a challenge that organizations face as they move workloads to public cloud platforms: protecting these new cloud workloads as effectively as their on-premises systems, using processes and tools that work in both environments.

In a recent webcast, SANS Institute and Qualys experts addressed this issue in detail, offering insights and recommendations for security teams that must protect hybrid IT infrastructures, with assets both on premises and in public clouds.

Cloud adoption triggers new security needs

In pursuit of digital transformation benefits, organizations are aggressively moving more workloads to public clouds, expanding from straightforward software-as-a-service (SaaS) applications to more involved platform- and infrastructure-as-a-service (PaaS and IaaS) deployments.

As this happens, InfoSec teams find that safeguarding these environments can be complex. “Security teams have rallied around the idea that this is something they need to live with,” Dave Shackleford, a SANS analyst and instructor, said during the webcast.

For example, having visibility into their workloads and assessing their security posture can be challenging, given that they have less control over these multi-tenant cloud platforms. InfoSec teams also need to figure out which new tools, expertise and staff they may need to acquire.

Recently, there have been many incidents where organizations have left cloud storage buckets unprotected, exposing confidential business and customer data publicly on the Internet. For example, The Los Angeles Times left an Amazon S3 bucket open with read and write access. Hackers inserted malicious code into the newspaper’s website and used visitors’ browsers to mine cryptocurrency.

“We’ve got to do the due diligence, and it’s up to us to lock down this kind of stuff,” Shackleford said.
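As a rough illustration of that kind of due diligence, the sketch below flags ACL grants that open a bucket to everyone. It assumes the ACL has already been fetched and has the shape of an S3 `GetBucketAcl` response (a `Grants` list with `Grantee` and `Permission` entries). The function name and sample data are hypothetical, and bucket policies and account-level public access settings, which also affect exposure, are not covered here.

```python
# URIs that S3 uses for its predefined "everyone" groups.
PUBLIC_GROUP_URIS = {
    "http://acs.amazonaws.com/groups/global/AllUsers",
    "http://acs.amazonaws.com/groups/global/AuthenticatedUsers",
}


def public_grants(acl):
    """Return the permissions that an ACL document grants to public groups."""
    found = []
    for grant in acl.get("Grants", []):
        grantee = grant.get("Grantee", {})
        if grantee.get("Type") == "Group" and grantee.get("URI") in PUBLIC_GROUP_URIS:
            found.append(grant.get("Permission"))
    return sorted(found)


# Hypothetical ACL resembling the open read/write case described above.
example_acl = {
    "Grants": [
        {"Grantee": {"Type": "CanonicalUser", "ID": "owner-id"},
         "Permission": "FULL_CONTROL"},
        {"Grantee": {"Type": "Group",
                     "URI": "http://acs.amazonaws.com/groups/global/AllUsers"},
         "Permission": "READ"},
        {"Grantee": {"Type": "Group",
                     "URI": "http://acs.amazonaws.com/groups/global/AllUsers"},
         "Permission": "WRITE"},
    ]
}

print(public_grants(example_acl))
```

Any non-empty result would be a reason to review the bucket before it holds anything sensitive.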

Steps to take

To properly adapt and map on-premises security controls and processes to public cloud environments, SANS recommends the following:

- Continually update risk assessment and analysis governance practices. Review cloud providers’ security controls, capabilities, and compliance status, as well as internal development and orchestration tools and platforms. Also review operations management and monitoring tools, and security tools and controls both in-house and in the cloud. This should give security teams clarity into which controls are currently in place, how they’ll need to be modified, and which issues are most pressing.

- Establish a set of configuration items to develop and maintain for cloud-based systems, including OS version and patch level; local users and groups; permissions on key files; and hardened network services.

- Scan and assess vulnerabilities continuously and throughout cloud instances’ lifecycles using either or both of these two methods:

  - Relying on APIs to avoid manual requests to perform more intrusive scans on a scheduled or ad hoc basis

  - Relying on host-based agents that can scan their respective virtual machines on a continuous basis, with reporting of any vulnerabilities noted

“If you’re looking at cloud workloads as these enormously dynamic, ever changing, environments, you’ve got to bake in a vulnerability management strategy from the definition of the environment in a completely automated way,” he said.
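One way to picture the configuration items mentioned above (OS version and patch level, local users, permissions on key files, network services) is as a baseline that each instance is compared against. The sketch below is a minimal illustration only: the baseline values, field names, and the `find_drift` helper are all assumptions made for this example, not part of any SANS or Qualys tooling.

```python
# Hypothetical baseline for a cloud instance; every value here is illustrative.
BASELINE = {
    "os_version": "Ubuntu 22.04",
    "patch_level": "2024-05",
    "local_users": {"root", "deploy"},
    "file_modes": {"/etc/shadow": 0o640, "/etc/ssh/sshd_config": 0o600},
    "listening_ports": {22, 443},
}


def find_drift(baseline, observed):
    """List human-readable differences between an instance and the baseline."""
    drift = []
    for key in ("os_version", "patch_level"):
        if observed.get(key) != baseline[key]:
            drift.append(f"{key}: expected {baseline[key]!r}, found {observed.get(key)!r}")
    # Users and ports beyond the approved set count as drift.
    for user in sorted(set(observed.get("local_users", ())) - baseline["local_users"]):
        drift.append(f"unexpected local user: {user}")
    for path, mode in sorted(baseline["file_modes"].items()):
        actual = observed.get("file_modes", {}).get(path)
        if actual != mode:
            shown = "missing" if actual is None else oct(actual)
            drift.append(f"{path}: expected mode {oct(mode)}, found {shown}")
    for port in sorted(set(observed.get("listening_ports", ())) - baseline["listening_ports"]):
        drift.append(f"unexpected listening port: {port}")
    return drift


observed = {
    "os_version": "Ubuntu 22.04",
    "patch_level": "2024-03",
    "local_users": {"root", "deploy", "tmpadmin"},
    "file_modes": {"/etc/shadow": 0o644, "/etc/ssh/sshd_config": 0o600},
    "listening_ports": {22, 443, 8080},
}

for item in find_drift(BASELINE, observed):
    print(item)
```

In practice the observed values would come from an agent or an API-driven scan; here they are hard-coded so the comparison logic can be read on its own.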

- Monitor complex, virtualized IaaS environments using a host-based tool whose agent reports to a management server, and send logs and events to a central collection platform. Logs and events generated by services, apps and OSes in the cloud environment can include:

— Unusual user logins or login failures

— Large data imports or exports to and from the cloud environment that weren’t anticipated

— Privileged user activities

— Changes to approved system images; to privileges and identity configuration; and to logging and monitoring configurations

— Access and changes to encryption keys

— Cloud-provider and third-party threat intelligence

Logging and event management aren’t new, but what’s new is the volume of logs generated in cloud environments. “It’s staggering, so people have to dig in there and go: ‘What do I really want to see here?’ And put some priorities around some of that,” Shackleford said.
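Putting priorities around log volume can start with something as simple as ranking event types and dropping what falls below a threshold. The sketch below assumes events arrive as dictionaries with a `type` field; the type names and priority numbers are hypothetical and loosely follow the event categories listed above.

```python
# Hypothetical priorities (higher means more urgent) for the event
# categories listed above. Unknown types default to 0 and are dropped.
PRIORITY = {
    "key_access": 3,
    "privilege_change": 3,
    "logging_config_change": 3,
    "image_change": 2,
    "privileged_activity": 2,
    "large_data_transfer": 2,
    "login_failure": 1,
}


def triage(events, min_priority=2):
    """Keep events at or above min_priority, highest priority first."""
    kept = [e for e in events if PRIORITY.get(e["type"], 0) >= min_priority]
    return sorted(kept, key=lambda e: PRIORITY[e["type"]], reverse=True)


events = [
    {"type": "login_failure", "user": "alice"},
    {"type": "large_data_transfer", "bytes": 5_000_000_000},
    {"type": "key_access", "key_id": "example-key"},
    {"type": "heartbeat"},
]

for event in triage(events):
    print(event["type"])
```

Raising or lowering `min_priority` is the knob a team would adjust as it decides what it really wants to see.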

- Adopt new “cloud native” security tools designed for these highly virtualized, multi-tenant public cloud environments. These security-as-a-service (SecaaS) tools integrate with cloud platform components via APIs, a new model for implementing security controls.

- “Shift left” and embed automated security controls and processes into DevOps environments, including code analysis and testing; logging and event monitoring; configuration and patch management; user and privilege management; and vulnerability assessment.

In this manner, DevOps becomes DevSecOps, with security integrated throughout the software development and delivery pipeline. In the DevOps and “infrastructure as code” world, everything is software-defined, including servers (mostly VMs), containers, application stacks, networks, and access models. “Security needs to be defined in this way, as well,” Shackleford said.

Automated controls that security teams need to implement for each phase of the DevOps pipeline include the following:

— Static scanning as development and code check-in is performed

— Dynamic scanning and testing during the building and testing phases

— Configuration implementation with approved templates and “infrastructure as code” definitions

— Monitoring of deployed instances through installed agents or continuous scanning within the cloud environment

— Assessment of container images in the registry and as promoted/launched in production

- Because containers require access to code repositories to install and configure software packages, security teams need tools that can scan container environments, as well as test the container daemon and its configuration, validate the containers running on the container host, and review the container security operations.

- Avoid silos of controls and point solutions from a single vendor or narrow cloud-native options. Instead, security teams should consider flexible and extensible SecaaS offerings for implementing controls in one or multiple cloud platforms.

“Hybrid cloud is the move. That’s where we’re all headed,” he said.
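The per-phase automated controls listed earlier can be treated as data, so that a pipeline run can be checked for gaps. This is a minimal sketch: the stage names, control names, and the `missing_controls` helper are assumptions chosen for this example and do not correspond to any specific CI/CD product.

```python
# Hypothetical mapping of pipeline stages to the controls expected at each.
REQUIRED_CONTROLS = {
    "commit": {"static_scan"},
    "build": {"dynamic_scan"},
    "test": {"dynamic_scan"},
    "deploy": {"approved_template"},
    "registry": {"image_assessment"},
    "production": {"agent_or_continuous_scan", "image_assessment"},
}


def missing_controls(stage_reports):
    """Return, per stage, the required controls that did not run."""
    gaps = {}
    for stage, required in REQUIRED_CONTROLS.items():
        absent = required - set(stage_reports.get(stage, ()))
        if absent:
            gaps[stage] = sorted(absent)
    return gaps


# Controls that actually ran in one hypothetical pipeline execution.
run = {
    "commit": ["static_scan"],
    "build": ["dynamic_scan"],
    "test": [],
    "deploy": ["approved_template"],
    "registry": ["image_assessment"],
    "production": ["image_assessment"],
}

print(missing_controls(run))
```

A pipeline could refuse to promote a build while this dictionary is non-empty, which is one way of defining security "as code" alongside the rest of the infrastructure.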

Securing hybrid IT environments with Qualys

The Qualys Cloud Platform and its suite of security and compliance Cloud Apps are ideally suited for protecting cloud and container DevOps pipelines, where the web apps that power digital transformation projects are created.

“Digital transformation is being powered by IT innovation, and security can’t be an afterthought,” said Chris Carlson, Vice President of Product Management at Qualys. “We need to bake security into this new infrastructure.”

With Qualys, you can integrate and automate security throughout the DevOps process — planning, coding, testing, releasing, deploying, monitoring — and build it into the software lifecycle instead of bolting it on at the end.

That way, vulnerabilities, misconfigurations, policy violations, malware and other safety issues can be addressed before code is released, reducing the risk of exposing your organization and your customers to cyber attacks.

“If you can get a handle on this, you’re better prepared as your IT counterparts are innovating very quickly in terms of their technology choices,” Carlson said.

Qualys Cloud Apps, such as Vulnerability Management (VM), Web Application Scanning (WAS), Policy Compliance (PC) and Container Security (CS), along with Qualys sensors, can be integrated and orchestrated via APIs with DevOps pipelines.

The Qualys Cloud Platform sensors – available as physical and virtual scanners, and as lightweight agents – are always on, remotely deployable, centrally managed and self-updating, enabling true distributed scanning and monitoring of all areas of today’s hybrid IT environments.

Specifically, the Qualys Cloud Agent extends security throughout an IT environment, by working where it’s not possible or practical to do network scanning, including in static and ephemeral cloud instances.

The Qualys Cloud Agent consumes minimal CPU resources, collects only metadata, and delivers multiple security functions, saving security teams from having to deploy and manage multiple agents with narrow capabilities.

How Qualys secures DevOps in public clouds

After integrating Qualys into their DevOps pipeline, organizations obtain a clear picture of the vulnerabilities and misconfigurations of their OSes and web applications. They’re also able to remediate these security problems before launching an app into production. And by placing the Qualys Cloud Agent into the DevOps environment, they obtain continuous monitoring.

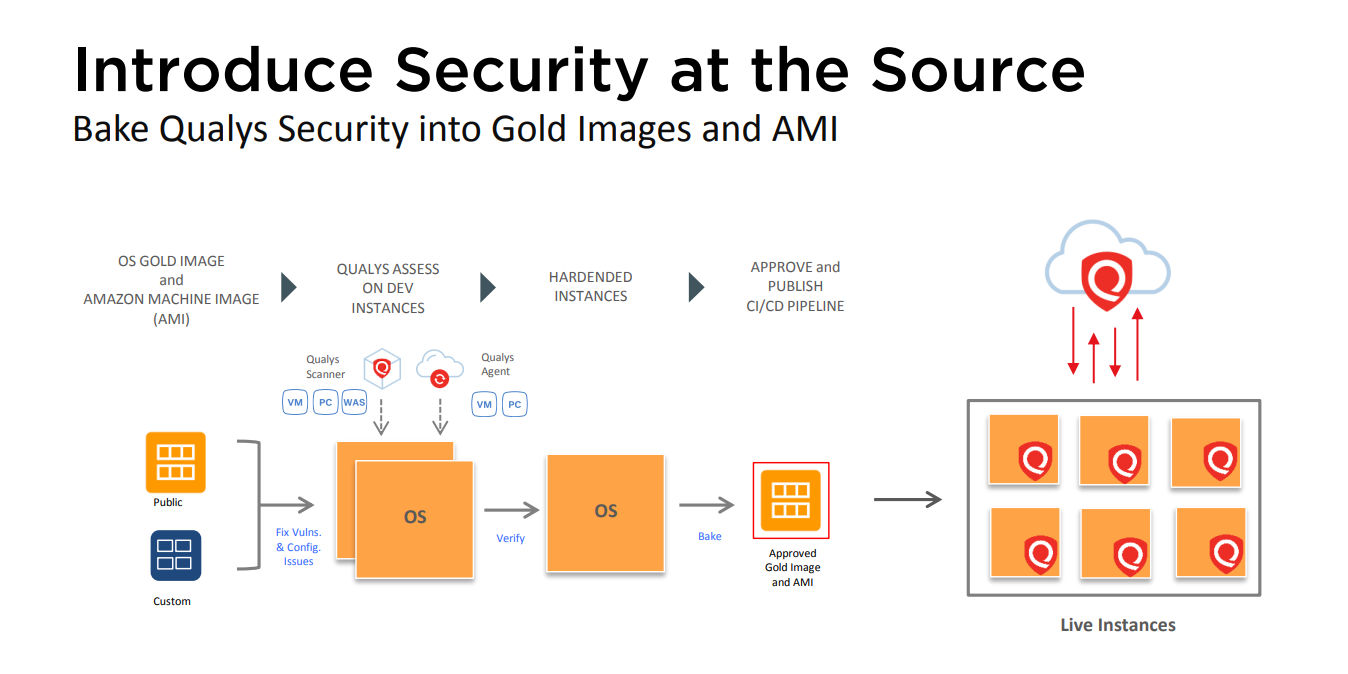

For example, in an AWS environment, after an AMI (Amazon Machine Image) is created, sample instances are spun up and scanned with Qualys. After identifying and fixing the vulnerabilities and misconfigurations, the security team ends up with a hardened base AMI, installs a Qualys Cloud Agent on it, and releases it to production.
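The scan, fix, and rescan cycle described above can be sketched as a small loop. The scanner, remediation step, and agent installation are passed in as plain functions, and the ones shown here are stand-ins invented for this example. They do not call AWS or Qualys, whose actual APIs are not shown in this article.

```python
def harden_image(image, scan, remediate, install_agent, max_rounds=5):
    """Scan and remediate until the image is clean, then install the agent."""
    for round_number in range(1, max_rounds + 1):
        findings = scan(image)
        if not findings:
            return install_agent(image), round_number
        image = remediate(image, findings)
    raise RuntimeError(f"image still has findings after {max_rounds} rounds")


# Stand-in implementations so the loop can be run locally.
def fake_scan(image):
    return sorted(image["issues"])


def fake_remediate(image, findings):
    return {**image, "issues": image["issues"] - set(findings)}


def fake_install_agent(image):
    return {**image, "agent": True}


base = {
    "name": "wallet-base",
    "issues": {"outdated-openssl", "world-writable-tmp"},
    "agent": False,
}

hardened, rounds = harden_image(base, fake_scan, fake_remediate, fake_install_agent)
print(hardened["name"], hardened["agent"], rounds)
```

The `max_rounds` limit is a design choice for the sketch: an image that cannot be brought to a clean scan should fail loudly instead of being released.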

Qualys functionality for vulnerability management, policy compliance and web application scanning is supported via REST APIs so you can programmatically integrate it with your DevOps tools.

Once AMIs have been released live, Qualys monitors and tracks their security posture via dynamic and interactive dashboards. There you can search and tag instances based on attributes, and use pre-built or custom widgets to monitor deployments, all via a “single pane of glass” UI.

In addition, the Qualys Cloud Connector for AWS continuously discovers instances and collects their metadata, including AMIs, through API integration. Qualys has similar native integrations with Microsoft Azure and Google Cloud Platform.
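Tagging instances based on attributes, as described above, amounts to applying rules to discovered metadata. The sketch below shows the idea with invented field names (`instance_id`, `ami_id`, `region`, `environment`) and invented tag rules. It is not the Qualys tagging syntax, only an illustration of the concept.

```python
# Hypothetical set of image IDs that passed the hardening process.
APPROVED_AMIS = {"ami-hardened-001"}

# Each tag is paired with a rule that inspects one instance's metadata.
TAG_RULES = {
    "production": lambda inst: inst.get("environment") == "production",
    "eu-region": lambda inst: inst.get("region", "").startswith("eu-"),
    "unapproved-image": lambda inst: inst.get("ami_id") not in APPROVED_AMIS,
}


def tag_instances(instances):
    """Map each instance ID to the sorted list of tags whose rules match."""
    return {
        inst["instance_id"]: sorted(tag for tag, rule in TAG_RULES.items() if rule(inst))
        for inst in instances
    }


instances = [
    {"instance_id": "i-0001", "ami_id": "ami-hardened-001",
     "region": "eu-west-1", "environment": "production"},
    {"instance_id": "i-0002", "ami_id": "ami-unknown-999",
     "region": "us-east-1", "environment": "staging"},
]

print(tag_instances(instances))
```

A tag such as `unapproved-image` is the kind of attribute a dashboard widget could then count or alert on.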

How Qualys secures containers in DevOps

Docker containers churn much faster than virtual machines, and are much more lightweight because, unlike VMs, they can be spun up without provisioning a guest OS for each one. This is why they’re so popular in DevOps teams, as they let developers create and deploy applications more quickly and efficiently, and with an increased level of portability.

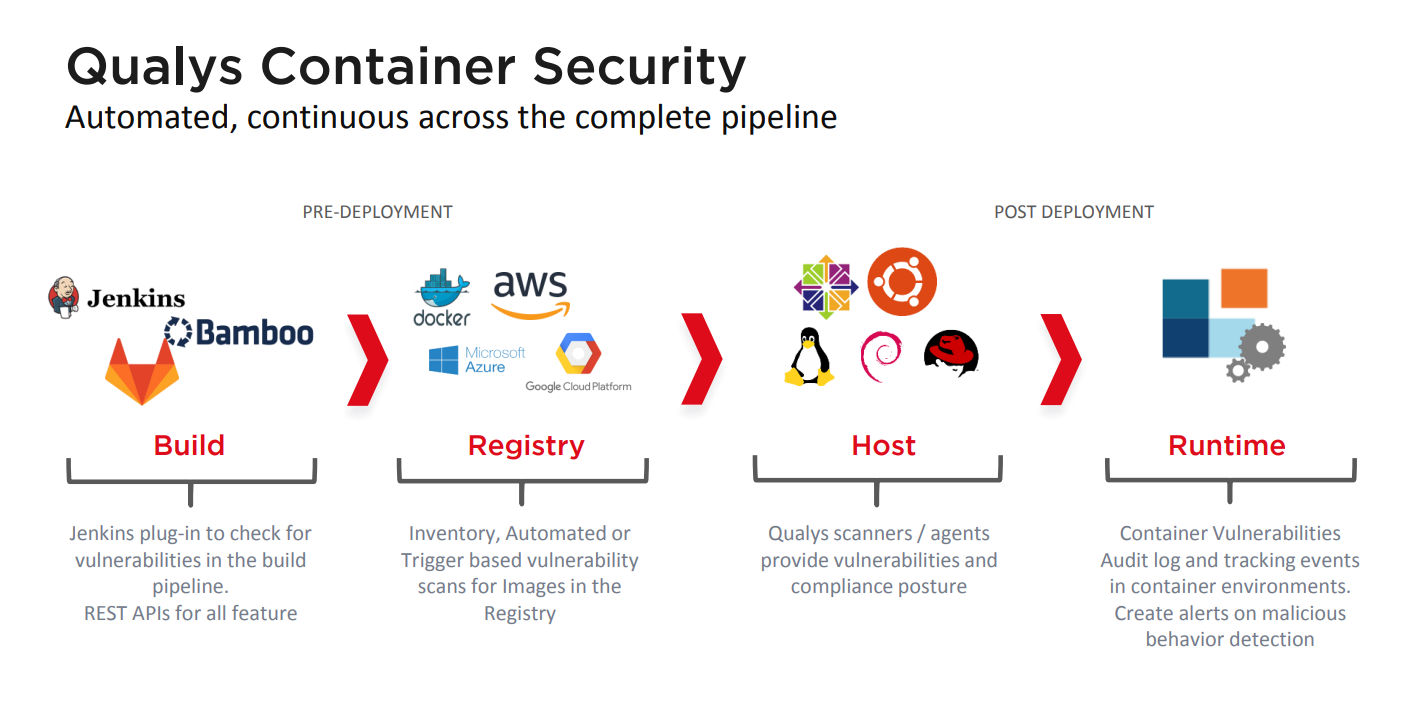

Qualys Container Security helps InfoSec teams with continuous discovery, tracking and protection of containers in DevOps pipelines and deployments at any scale, according to Carlson. Specifically, Qualys CS offers:

- Discovery, inventory, and near-real-time tracking of container events

- Vulnerability analysis for image registries and containers

- Event and change tracking

- REST API integration

Qualys CS also features a native container sensor, which is distributed as a Docker image. Users can download and deploy these sensors directly on their container hosts, add them to the private registries for distribution, or integrate them with orchestration tools for automatic deployment across elastic cloud environments.

Qualys Container Security provides organizations with automated, continuous protection across the complete pipeline chain, including the build, registry, host and runtime environments.
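To make the registry stage of that pipeline concrete, the sketch below decides which images should be held back from promotion: any image that has not been assessed, or whose worst finding meets a severity limit. The data shapes, the 1 to 5 severity scale, and the `blocked_images` helper are assumptions for illustration and are not taken from Qualys Container Security.

```python
SEVERITY_LIMIT = 4  # hypothetical scale from 1 (low) to 5 (critical)


def blocked_images(registry_images, assessments, limit=SEVERITY_LIMIT):
    """Return image names that should not be promoted, with the reason."""
    blocked = {}
    for image in registry_images:
        digest = image["digest"]
        if digest not in assessments:
            blocked[image["name"]] = "not assessed"
            continue
        worst = max(assessments[digest], default=0)
        if worst >= limit:
            blocked[image["name"]] = f"severity {worst} finding"
    return blocked


registry_images = [
    {"name": "wallet-api", "digest": "sha256:aaa"},
    {"name": "wallet-web", "digest": "sha256:bbb"},
    {"name": "wallet-batch", "digest": "sha256:ccc"},
]

# Severity of each finding, keyed by image digest (hypothetical results).
assessments = {
    "sha256:aaa": [2, 5],
    "sha256:bbb": [],
}

print(blocked_images(registry_images, assessments))
```

Keying assessments by digest instead of by tag means a rebuilt image with the same name is treated as unassessed until it has been scanned again.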

About that mobile wallet app …

Let’s circle back to the large bank’s mobile wallet, and how security was integrated into its development and deployment using Qualys. “IT and security partnered from the beginning, and leveraged each other’s technologies,” Carlson said.

From the DevOps side, the app was born in the cloud, with everything in AWS: planning, testing, regression, staging, build, deployment, and production. Complete new builds of the app are produced and deployed into AWS every 60 days.

- To keep up this speed, they’ve created automated regression and test-driven development so they can quickly and easily find any functional defects created or introduced between builds.

- Docker containers are used to abstract the app from the OS, which lets the bank iterate on the apps faster without being constrained by dependencies in the underlying OS.

Meanwhile, the security team transparently integrated vulnerability and compliance assessment into the DevOps process from the first day:

- Code vulnerabilities are fixed in the same software-release cadence. The bank checks for vulnerabilities in both commercial and open source software used in the project.

“They can find and fix those vulnerabilities, and verify they’ve been properly fixed on the same release cadence. They don’t wait until the app goes into production to do an assessment after the fact,” he said.

- The automated regression for functional testing is also applied to more quickly find issues with patches and vulnerability remediation.

- Because they’re using Docker containers, IT teams can apply security patches to OS vulnerabilities at a separate cadence from application vulnerabilities, and patch them separately without worrying that patches to one will break the other.

Consequently, this app ships with far fewer severe vulnerabilities than the average legacy app. And the mobile wallet app vulnerabilities that do make it into production, as well as newly disclosed vulnerabilities that affect production applications, are patched much more frequently and consistently than in legacy apps, driving towards the goal of “zero vulnerabilities”.

For many more details about this topic, please listen to a recording of the webcast “Securing the Hybrid Cloud: A Guide to Using Security Controls, Tools and Automation”, and download a copy of the accompanying white paper: “Securing the Hybrid Cloud: Traditional vs. New Tools and Strategies”.