Pebble Smart Watch Developer Portal Vulnerability

Here’s a short story about a simple vulnerability that was easy to fix, but nonetheless could have had serious consequences.

Imagine an attacker, who doesn’t even have root access, being able to:

- Steal source code from the community of Pebble watch developers

- Replace their binaries with malicious ones

- Deploy the malicious binaries to the developers’ watches when they click the ‘Remote Deployment’ button.

All of the above was possible until Pebble shipped a quick fix (kudos to them!). And Pebble is not alone: at Black Hat and DEF CON this year, researchers demonstrated a wide array of device hacks. The lesson for developers is to build secure coding practices and security testing into every stage of the software lifecycle.

About Pebble

Pebble is a well-known player in the expanding wearables (smart watch) market. One of its key strengths has been its app market, which currently offers more than 6,000 apps and watch faces. In 2013 Pebble launched the cloudpebble.net portal, where developers can code, build and remotely deploy apps to their smartwatch without installing any SDK on their machines.

The Vulnerability

While building a Pebble watch app through cloudpebble.net, I noticed that the build logs contain output from build commands run in a Linux shell. I wanted to check whether I could inject a custom command into the build process and read its output from the build log. After a few attempts, I succeeded. Following Qualys’ responsible disclosure policy, I contacted Pebble and provided details of the attack. Pebble acknowledged the issue and shipped a fix within 6 hours, which was quite impressive. As a token of appreciation, they added me to their ‘White Hat Hall Of Fame’.

Proof of Concept

Here are the details of how I carried out this attack.

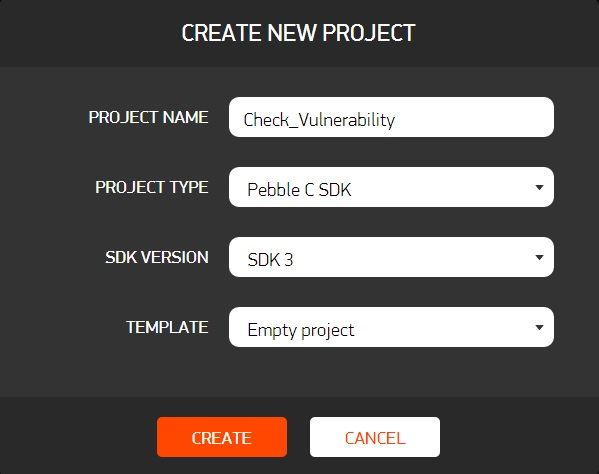

- I created a cloudpebble.net account, logged in at https://cloudpebble.net/ide/, and created a new project with the configuration shown below.

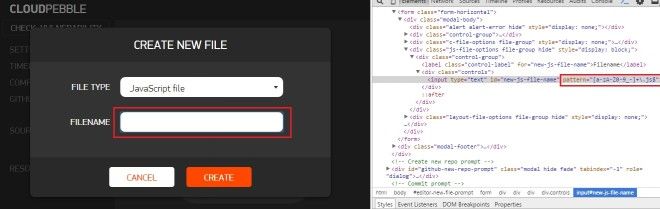

- I created a .js source file under this project. The file name field had only client-side validation; surprisingly, there was no server-side validation.

I removed the client-side validation and tried embedding different commands in the file name string.

- I was able to execute various commands on the server, and the output of some of them was written to the buildlog.txt file.

After a few tries, I found that I could make the system dump the contents of the /etc/passwd file by using the file name “a.js | wget -i /etc/passwd | a.js”. Although this caused the build to fail, buildlog.txt was still available and contained the contents of /etc/passwd. This meant I could log in as any other developer on this build system and access and change their source and binary files.

- The cloudpebble.net website also provides a remote deployment facility. Using this feature, files built on this server in a specific developer’s account can be automatically installed on that developer’s Pebble watch. Because I could log in as any developer, this made the attack very powerful.
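The root cause above, a user-controlled file name reaching a shell, can be illustrated with a short Python sketch. CloudPebble’s build code was not published, so the function names and the `cat` command below are hypothetical; the sketch only assumes that the build step pasted the file name into a shell command string. It contrasts that pattern with two safer options: escaping the name with `shlex.quote`, or passing an argument list so no shell is involved at all.

```python
import shlex

# Hypothetical build step: the user-supplied file name is pasted into a
# string that a shell will parse. CloudPebble's real code was not published.
def build_command_unsafe(filename: str) -> str:
    return f"cat {filename}"  # UNSAFE: the shell interprets |, ;, $( ) etc.


# Safer option 1: escape the name so the shell sees one literal argument.
def build_command_quoted(filename: str) -> str:
    return f"cat {shlex.quote(filename)}"


# Safer option 2: avoid the shell entirely and pass an argument list,
# e.g. subprocess.run(build_argv(name), check=True) with the default shell=False.
def build_argv(filename: str) -> list[str]:
    return ["cat", "--", filename]  # "--" stops option parsing for names like "-x"


if __name__ == "__main__":
    name = "a.js | wget -i /etc/passwd | a.js"  # file name from the proof of concept
    # shlex.split tokenizes on whitespace and quotes; each '|' showing up as its
    # own word marks where a real shell would start a new command.
    print(shlex.split(build_command_unsafe(name)))
    print(shlex.split(build_command_quoted(name)))
    print(build_argv(name))
```

In the unsafe version the pipe characters survive as separate words, so a shell would run `wget` as its own command; in both safer versions the entire malicious string stays a single, harmless file name argument.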

The Fix

Fixing this issue was straightforward. The Pebble team added server-side validation to the file and project name creation pages, in addition to the existing client-side JavaScript validation. Now, even if someone disables the JavaScript validation in their browser, the server still rejects the invalid file name.
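A minimal sketch of such a server-side check follows, in Python. The exact rule Pebble deployed is not public; the allow-list pattern, the length cap, and the `.c`/`.h`/`.js` extensions are assumptions made for illustration.

```python
import re

# Hypothetical allow-list (Pebble's actual rule was not published):
# - first character: letter, digit or underscore (blocks leading '-' and '.')
# - then up to 63 letters, digits, '_', '.', or '-'
# - must end in an assumed source extension: .c, .h or .js
_ALLOWED_NAME = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,63}\.(?:c|h|js)")


def is_valid_source_filename(name: str) -> bool:
    """Server-side check that runs regardless of any client-side JavaScript."""
    # fullmatch requires the whole name to match, so spaces, '|', ';', '/',
    # '$' and other shell metacharacters anywhere in the name cause rejection.
    return _ALLOWED_NAME.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ["main.c", "app.js", "a.js | wget -i /etc/passwd | a.js"]:
        print(candidate, "->", is_valid_source_filename(candidate))
```

An allow-list is used here rather than a deny-list of dangerous characters because it is easy to overlook one of the many shell metacharacters. A check like this works best alongside, not instead of, keeping file names out of shell command strings altogether.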

For an extra level of safety, developers should disable the ‘Developer Connection’ option in the Pebble Mobile Application, except when they are trying to deploy and test their applications using cloudpebble.net.